Contents

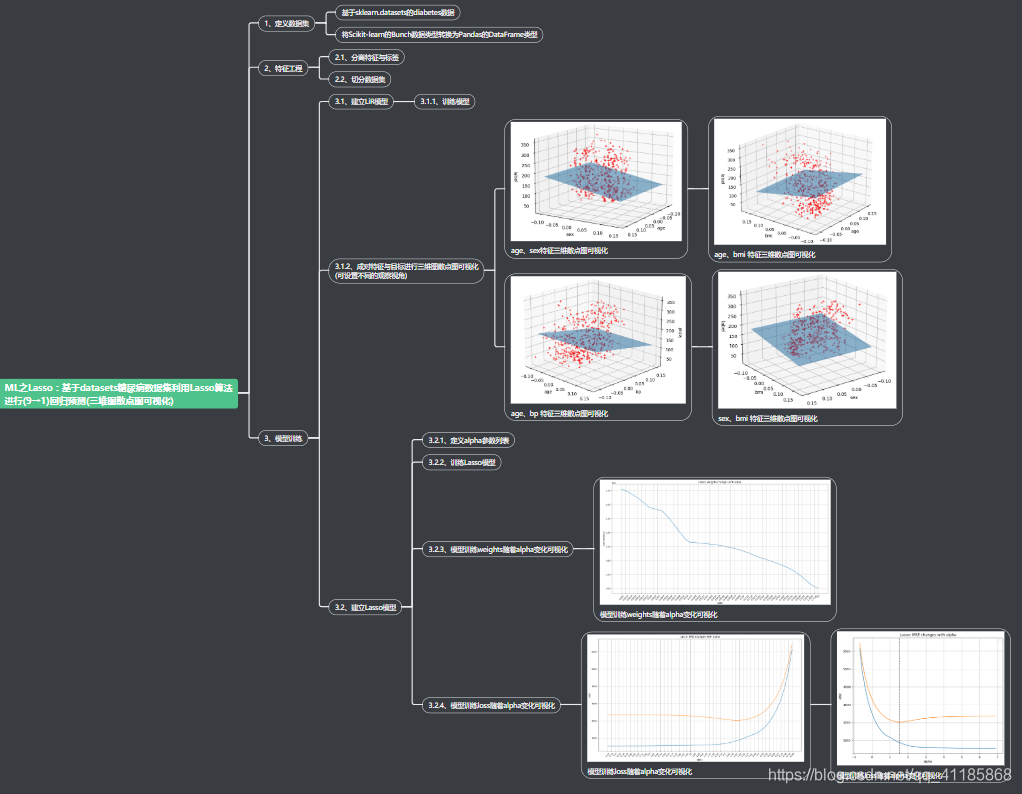

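Regression prediction (9→1) on the datasets diabetes dataset using the LiR (linear regression) and Lasso algorithms, with 3D and scatter plot visualization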

Related articles

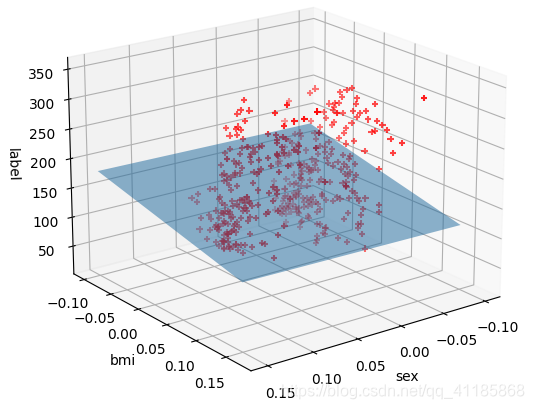

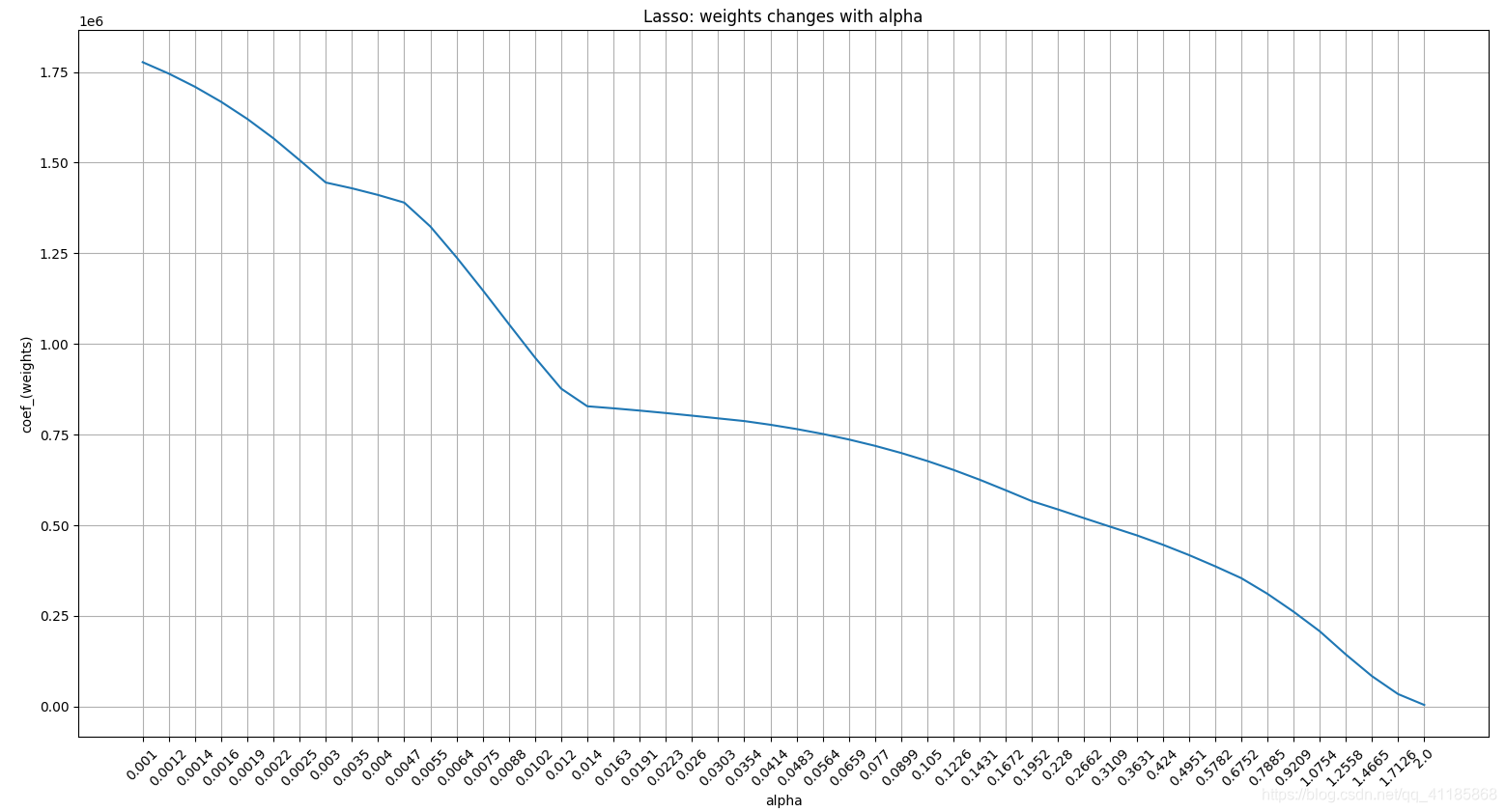

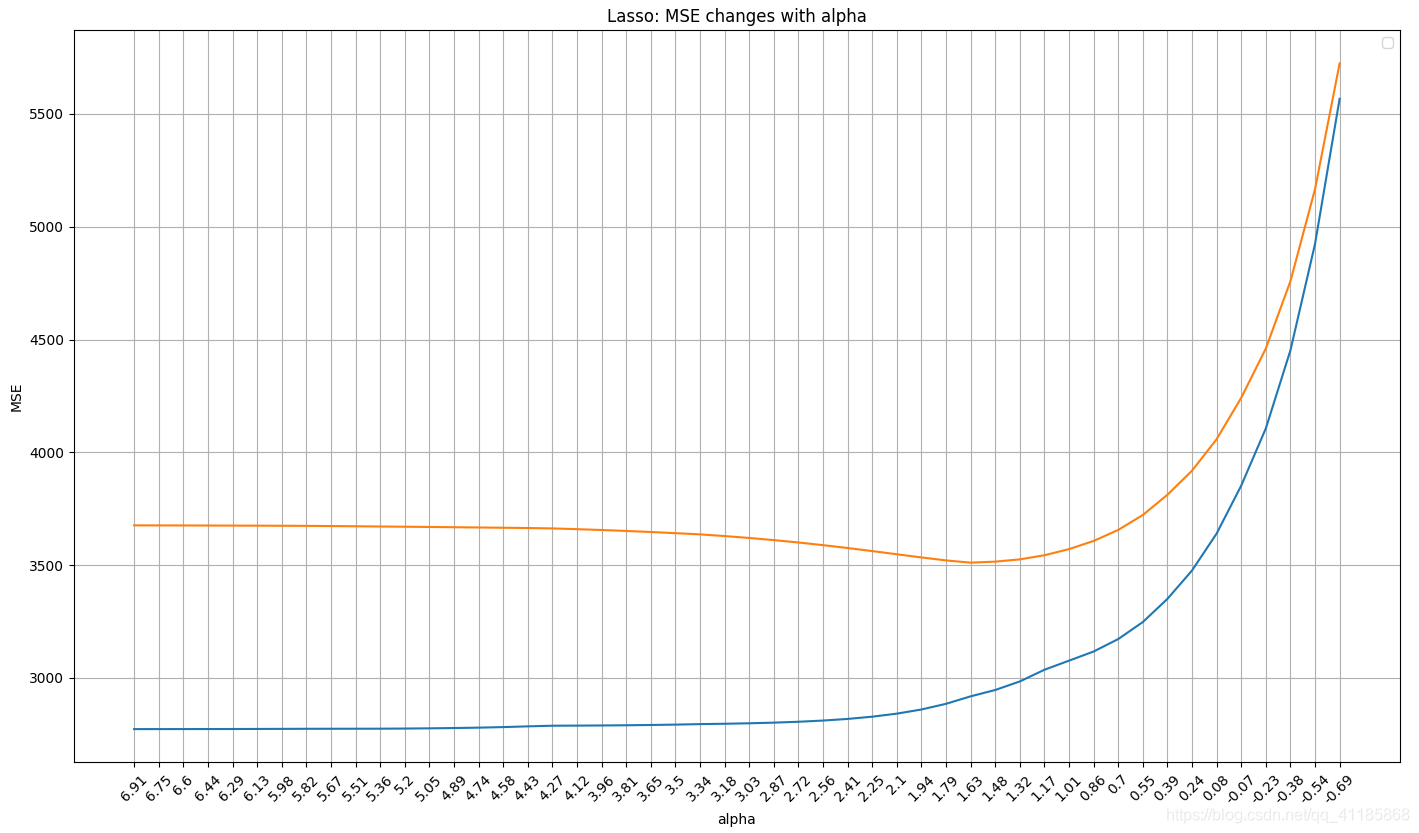

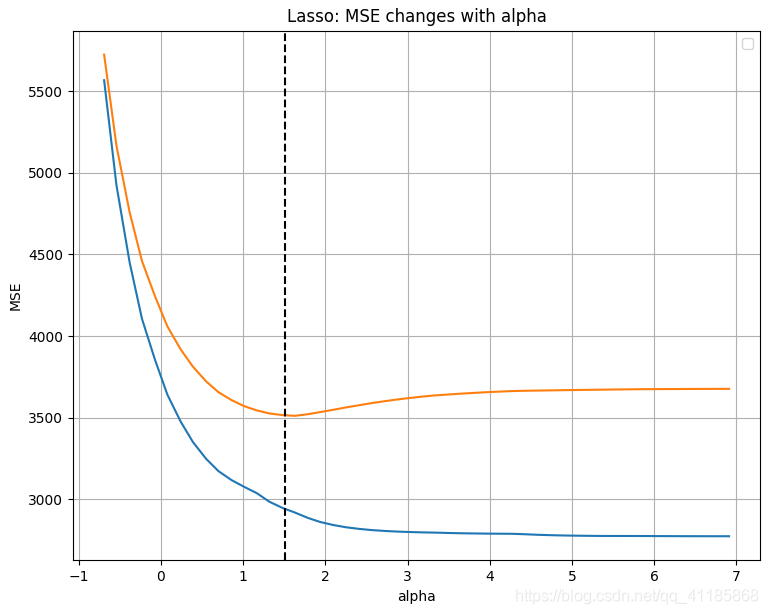

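ML, LiR & Lasso: Regression prediction (9→1) on the datasets diabetes dataset using the LiR and Lasso algorithms, with 3D and scatter plot visualization

ML, LiR & Lasso: Regression prediction (9→1) on the datasets diabetes dataset using the LiR and Lasso algorithms, with 3D and scatter plot visualization (implementation)

Regression prediction (9→1) on the datasets diabetes dataset using the LiR and Lasso algorithms, with 3D and scatter plot visualization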

Design approach

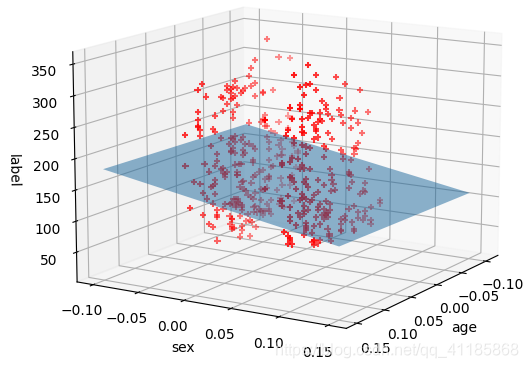

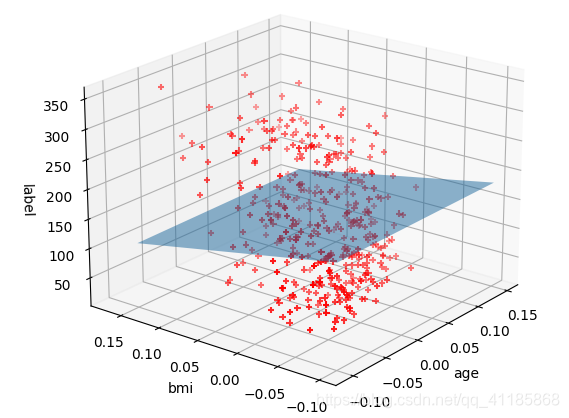

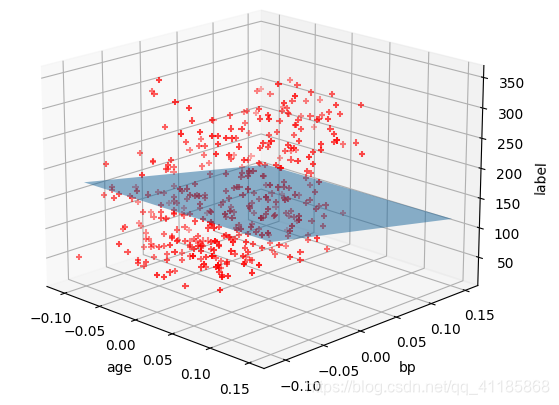
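
The article shows no code for this stage, so the following is only a minimal sketch of the workflow the title describes, assuming the data comes from `sklearn.datasets.load_diabetes`. The split ratio, the random seed, and `alpha=0.1` are illustrative assumptions. The sketch uses all ten columns returned by `load_diabetes`, because the feature selection behind the 9→1 setup is not shown.

```python
from sklearn.datasets import load_diabetes
from sklearn.linear_model import Lasso, LinearRegression
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

# Load the diabetes data: X holds the input features, y the regression target.
X, y = load_diabetes(return_X_y=True)

# Hold out part of the data for evaluation (ratio and seed are assumptions).
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Fit both models; alpha=0.1 is an illustrative choice, not the article's setting.
models = {
    "LiR": LinearRegression(),
    "Lasso": Lasso(alpha=0.1),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = r2_score(y_test, model.predict(X_test))
    n_nonzero = int((model.coef_ != 0).sum())
    print(f"{name}: R^2 = {scores[name]:.3f}, non-zero coefficients = {n_nonzero}")
```

Comparing the count of non-zero coefficients is one simple way to see the effect of the L1 penalty: Lasso can set some coefficients exactly to zero, while ordinary least squares generally does not.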

Output
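
The title mentions 3D and scatter plot visualization, but the plotting code is not included here. One way such a figure could be drawn is sketched below. The choice of the `bmi` and `s5` columns as the two horizontal axes, the `alpha=0.1` setting, and the output file name are all assumptions made for illustration.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend, so no display is required
import matplotlib.pyplot as plt
from sklearn.datasets import load_diabetes
from sklearn.linear_model import Lasso

X, y = load_diabetes(return_X_y=True)

# Fit on all features, then predict on the same data purely for plotting.
model = Lasso(alpha=0.1).fit(X, y)
y_pred = model.predict(X)

# Columns 2 and 8 of load_diabetes are "bmi" and "s5".
bmi, s5 = X[:, 2], X[:, 8]

fig = plt.figure(figsize=(7, 5))
ax = fig.add_subplot(projection="3d")
ax.scatter(bmi, s5, y, s=10, label="observed target")
ax.scatter(bmi, s5, y_pred, s=10, marker="^", label="Lasso prediction")
ax.set_xlabel("bmi")
ax.set_ylabel("s5")
ax.set_zlabel("target")
ax.legend()
fig.savefig("lasso_3d_scatter.png", dpi=120)  # hypothetical output file name
```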

Lasso core code

```python
# class Lasso found at: sklearn.linear_model._coordinate_descent
# Excerpt from the library source; ElasticNet, enet_path and
# _deprecate_positional_args are defined or imported elsewhere in that module.

class Lasso(ElasticNet):
    """Linear Model trained with L1 prior as regularizer (aka the Lasso)

    The optimization objective for Lasso is::

        (1 / (2 * n_samples)) * ||y - Xw||^2_2 + alpha * ||w||_1

    Technically the Lasso model is optimizing the same objective function as
    the Elastic Net with ``l1_ratio=1.0`` (no L2 penalty).

    Read more in the :ref:`User Guide <lasso>`.

    Parameters
    ----------
    alpha : float, default=1.0
        Constant that multiplies the L1 term. Defaults to 1.0.
        ``alpha = 0`` is equivalent to an ordinary least square, solved
        by the :class:`LinearRegression` object. For numerical
        reasons, using ``alpha = 0`` with the ``Lasso`` object is not advised.
        Given this, you should use the :class:`LinearRegression` object.

    fit_intercept : bool, default=True
        Whether to calculate the intercept for this model. If set
        to False, no intercept will be used in calculations
        (i.e. data is expected to be centered).

    normalize : bool, default=False
        This parameter is ignored when ``fit_intercept`` is set to False.
        If True, the regressors X will be normalized before regression by
        subtracting the mean and dividing by the l2-norm.
        If you wish to standardize, please use
        :class:`sklearn.preprocessing.StandardScaler` before calling ``fit``
        on an estimator with ``normalize=False``.

    precompute : 'auto', bool or array-like of shape (n_features, n_features),\
                 default=False
        Whether to use a precomputed Gram matrix to speed up
        calculations. If set to ``'auto'`` let us decide. The Gram
        matrix can also be passed as argument. For sparse input
        this option is always ``True`` to preserve sparsity.

    copy_X : bool, default=True
        If ``True``, X will be copied; else, it may be overwritten.

    max_iter : int, default=1000
        The maximum number of iterations

    tol : float, default=1e-4
        The tolerance for the optimization: if the updates are
        smaller than ``tol``, the optimization code checks the
        dual gap for optimality and continues until it is smaller
        than ``tol``.

    warm_start : bool, default=False
        When set to True, reuse the solution of the previous call to fit as
        initialization, otherwise, just erase the previous solution.
        See :term:`the Glossary <warm_start>`.

    positive : bool, default=False
        When set to ``True``, forces the coefficients to be positive.

    random_state : int, RandomState instance, default=None
        The seed of the pseudo random number generator that selects a random
        feature to update. Used when ``selection`` == 'random'.
        Pass an int for reproducible output across multiple function calls.
        See :term:`Glossary <random_state>`.

    selection : {'cyclic', 'random'}, default='cyclic'
        If set to 'random', a random coefficient is updated every iteration
        rather than looping over features sequentially by default. This
        (setting to 'random') often leads to significantly faster convergence
        especially when tol is higher than 1e-4.

    Attributes
    ----------
    coef_ : ndarray of shape (n_features,) or (n_targets, n_features)
        parameter vector (w in the cost function formula)

    sparse_coef_ : sparse matrix of shape (n_features, 1) or \
            (n_targets, n_features)
        ``sparse_coef_`` is a readonly property derived from ``coef_``

    intercept_ : float or ndarray of shape (n_targets,)
        independent term in decision function.

    n_iter_ : int or list of int
        number of iterations run by the coordinate descent solver to reach
        the specified tolerance.

    Examples
    --------
    >>> from sklearn import linear_model
    >>> clf = linear_model.Lasso(alpha=0.1)
    >>> clf.fit([[0,0], [1, 1], [2, 2]], [0, 1, 2])
    Lasso(alpha=0.1)
    >>> print(clf.coef_)
    [0.85 0.  ]
    >>> print(clf.intercept_)
    0.15...

    See also
    --------
    lars_path
    lasso_path
    LassoLars
    LassoCV
    LassoLarsCV
    sklearn.decomposition.sparse_encode

    Notes
    -----
    The algorithm used to fit the model is coordinate descent.

    To avoid unnecessary memory duplication the X argument of the fit method
    should be directly passed as a Fortran-contiguous numpy array.
    """
    path = staticmethod(enet_path)

    @_deprecate_positional_args
    def __init__(self, alpha=1.0, *, fit_intercept=True, normalize=False,
                 precompute=False, copy_X=True, max_iter=1000,
                 tol=1e-4, warm_start=False, positive=False,
                 random_state=None, selection='cyclic'):
        super().__init__(alpha=alpha, l1_ratio=1.0, fit_intercept=fit_intercept,
                         normalize=normalize, precompute=precompute, copy_X=copy_X,
                         max_iter=max_iter, tol=tol, warm_start=warm_start,
                         positive=positive, random_state=random_state,
                         selection=selection)


###############################################################################
# Functions for CV with paths functions
```
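
The docstring above states the Lasso objective and notes that Lasso matches the Elastic Net with `l1_ratio=1.0`. The short check below illustrates both points on the toy data from the docstring example. The helper `lasso_objective` is a hypothetical name introduced here, not part of scikit-learn, and it adds the fitted intercept to the prediction, which the docstring formula leaves implicit.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso

# Toy data taken from the docstring example.
X = np.array([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]])
y = np.array([0.0, 1.0, 2.0])
alpha = 0.1

lasso = Lasso(alpha=alpha).fit(X, y)
enet = ElasticNet(alpha=alpha, l1_ratio=1.0).fit(X, y)


def lasso_objective(X, y, coef, intercept, alpha):
    """(1 / (2 * n_samples)) * ||y - (Xw + b)||^2_2 + alpha * ||w||_1"""
    residual = y - (X @ coef + intercept)
    return residual @ residual / (2 * len(y)) + alpha * np.abs(coef).sum()


# Objective at the Lasso solution.
obj_lasso = lasso_objective(X, y, lasso.coef_, lasso.intercept_, alpha)

# Objective at a perfect least-squares fit (w = [0.5, 0.5], b = 0), which has
# zero residual but pays a larger L1 penalty.
obj_ols = lasso_objective(X, y, np.array([0.5, 0.5]), 0.0, alpha)

print("Lasso coef:", lasso.coef_, "intercept:", lasso.intercept_)
print("Same as ElasticNet(l1_ratio=1.0):", np.allclose(lasso.coef_, enet.coef_))
print("Objective at Lasso solution:", obj_lasso)
print("Objective at least-squares fit:", obj_ols)
```

The penalized objective is lower at the Lasso solution than at the exact least-squares fit, which is why the fitted model accepts a small residual in exchange for a sparser coefficient vector.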