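Background

This article briefly describes how to deploy a BERT model with Triton, mainly following NVIDIA/DeepLearningExamples.

For more, and more timely, content, follow the WeChat official account: 小窗幽记机器学习

Preparation

Download the data

Change into /data/DeepLearningExamples-master/PyTorch/LanguageModeling/BERT/data/squad, then download the data: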

bash ./squad_download.sh

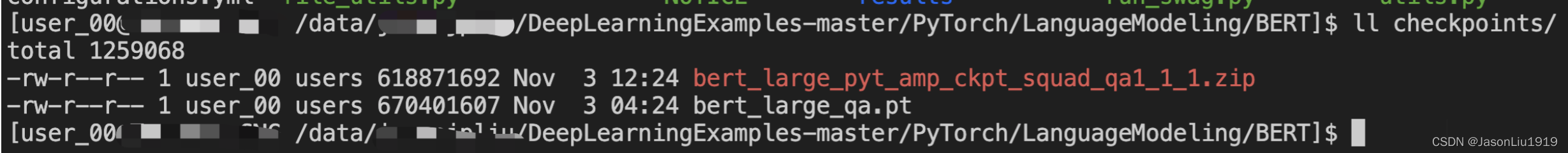
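As a quick sanity check on the download, the sketch below counts the questions in a SQuAD-format JSON file. It relies only on the published SQuAD layout (`data` → `paragraphs` → `qas`); the file path you pass in (for instance a dev-set JSON under the download directory) is an assumption, since the article does not list the files that the script produces.

```python
import json
from typing import Any, Dict


def count_squad_questions(dataset: Dict[str, Any]) -> int:
    """Count the questions in an already-loaded SQuAD-format dictionary."""
    return sum(
        len(paragraph["qas"])
        for article in dataset["data"]
        for paragraph in article["paragraphs"]
    )


def count_squad_questions_in_file(path: str) -> int:
    """Load a SQuAD-format JSON file (path is supplied by the caller) and count its questions."""
    with open(path, encoding="utf-8") as f:
        return count_squad_questions(json.load(f))
```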

Download the model

wget --content-disposition https://api.ngc.nvidia.com/v2/models/nvidia/bert_large_pyt_amp_ckpt_squad_qa1_1/versions/1/zip -O bert_large_pyt_amp_ckpt_squad_qa1_1_1.zip

Because the scripts all expect a file named bert_qa.pt, rename the downloaded model file accordingly.

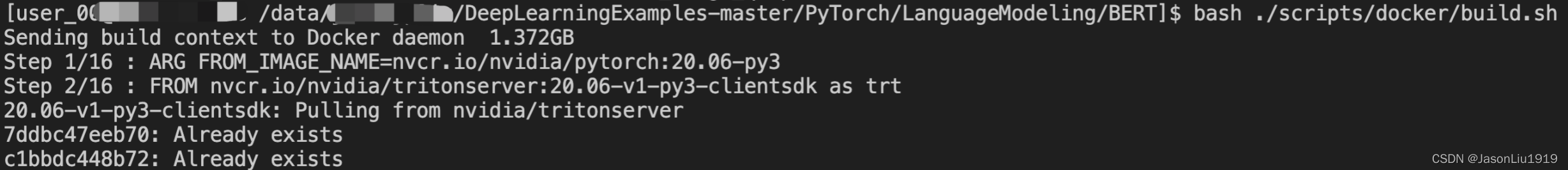
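The unpack-and-rename step can be sketched as follows. This is a minimal illustration under two assumptions that the article does not confirm: the downloaded archive contains exactly one `.pt` file, and `dest_dir` (a hypothetical parameter) is the directory where the scripts look for `bert_qa.pt`.

```python
import shutil
import zipfile
from pathlib import Path


def extract_checkpoint(zip_path: str, dest_dir: str,
                       target_name: str = "bert_qa.pt") -> Path:
    """Copy the single .pt file inside the archive to dest_dir/target_name."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        # Assumption: exactly one checkpoint file is present in the archive.
        pt_members = [m for m in archive.namelist() if m.endswith(".pt")]
        if len(pt_members) != 1:
            raise ValueError(f"expected exactly one .pt file, found {pt_members}")
        target = dest / target_name
        with archive.open(pt_members[0]) as src, open(target, "wb") as dst:
            shutil.copyfileobj(src, dst)
    return target
```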

Build the container

bash ./scripts/docker/build.sh

Processing triggers for libc-bin (2.27-3ubuntu1) ...

Removing intermediate container 89010b0a75b2

---> 562bcc14dbfa

Step 15/15 : COPY . .

---> 23bac3585a43

Successfully built 23bac3585a43

Successfully tagged bert:latest

Model deployment

Export the checkpoint to TorchScript

On the host (not inside a container), change into DeepLearningExamples-master/PyTorch/LanguageModeling/BERT and run the following script to convert the checkpoint to TorchScript:

bash ./triton/export_model.sh

Conversion output:

=============

== PyTorch ==

=============

NVIDIA Release 20.06 (build 13419386)

PyTorch Version 1.6.0a0+9907a3e

Container image Copyright (c) 2020, NVIDIA CORPORATION. All rights reserved.

Copyright (c) 2014-2020 Facebook Inc.

Copyright (c) 2011-2014 Idiap Research Institute (Ronan Collobert)

Copyright (c) 2012-2014 Deepmind Technologies (Koray Kavukcuoglu)

Copyright (c) 2011-2012 NEC Laboratories America (Koray Kavukcuoglu)

Copyright (c) 2011-2013 NYU (Clement Farabet)

Copyright (c) 2006-2010 NEC Laboratories America (Ronan Collobert, Leon Bottou, Iain Melvin, Jason Weston)

Copyright (c) 2006 Idiap Research Institute (Samy Bengio)

Copyright (c) 2001-2004 Idiap Research Institute (Ronan Collobert, Samy Bengio, Johnny Mariethoz)

Copyright (c) 2015 Google Inc.

Copyright (c) 2015 Yangqing Jia

Copyright (c) 2013-2016 The Caffe contributors

All rights reserved.

Various files include modifications (c) NVIDIA CORPORATION. All rights reserved.

NVIDIA modifications are covered by the license terms that apply to the underlying project or file.

NOTE: Legacy NVIDIA Driver detected. Compatibility mode ENABLED.

NOTE: MOFED driver for multi-node communication was not detected.

Multi-node communication performance may be reduced.

deploying model bertQA-ts-script in format pytorch_libtorch

/opt/conda/lib/python3.6/site-packages/torch/jit/_recursive.py:160: UserWarning: 'bias' was found in ScriptModule constants, but it is a non-constant parameter. Consider removing it.

" but it is a non-constant {}. Consider removing it.".format(name, hint))

conversion correctness test results

-----------------------------------

maximal absolute error over dataset (L_inf): 0.0322265625

average L_inf error over output tensors: 0.02264404296875

variance of L_inf error over output tensors: 5.4970383644104004e-05

stddev of L_inf error over output tensors: 0.00741420148391612

time of error check of native model: 0.8040032386779785 seconds

time of error check of ts model: 1.7353665828704834 seconds

done

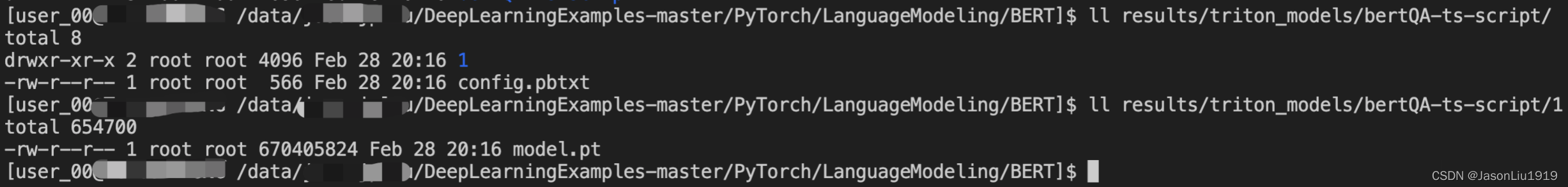

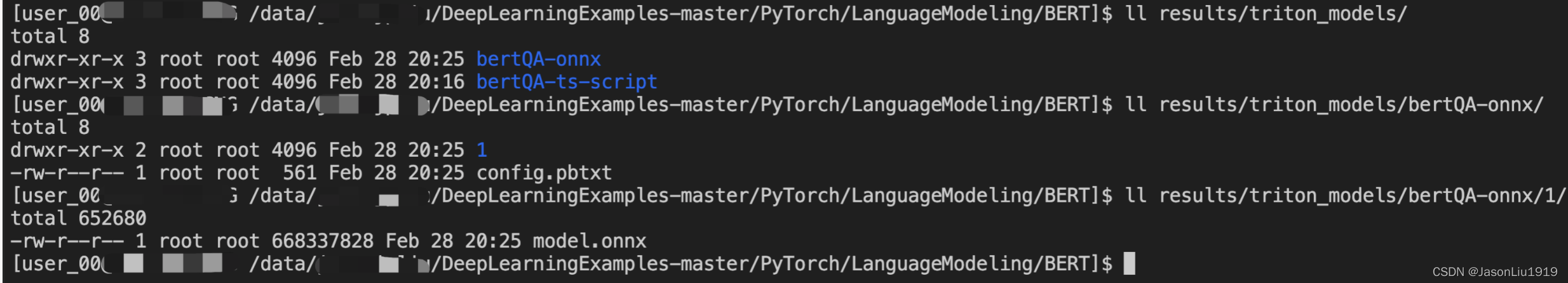

After the conversion, the model to be deployed with Triton is stored under BERT/results/triton_models.
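To see what the export produced, the sketch below lists the models in a repository directory. It assumes the standard Triton model repository layout (one directory per model holding a `config.pbtxt` plus numbered version subdirectories); whether the export script writes exactly this layout is not shown in the article, so treat the result as a check rather than a guarantee.

```python
from pathlib import Path
from typing import Dict, List


def list_triton_models(repo_dir: str) -> Dict[str, List[str]]:
    """Map each model directory that has a config.pbtxt to its numeric version directories."""
    models: Dict[str, List[str]] = {}
    for model_dir in sorted(Path(repo_dir).iterdir()):
        if not model_dir.is_dir():
            continue
        if not (model_dir / "config.pbtxt").is_file():
            continue  # not a model directory under the assumed layout
        versions = sorted(
            (p.name for p in model_dir.iterdir() if p.is_dir() and p.name.isdigit()),
            key=int,
        )
        models[model_dir.name] = versions
    return models
```

Calling `list_triton_models("results/triton_models")` from the BERT directory would then return one entry per exported model.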

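In ./triton/export_model.sh, an EXPORT_FORMAT value of ts-script means the checkpoint is converted to TorchScript. To deploy in ONNX format instead, set EXPORT_FORMAT in ./triton/export_model.sh to onnx. Also change triton_model_name accordingly, for example to bertQA-onnx, so that the newly converted model gets an appropriate name.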
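If you prefer to script this edit rather than make it by hand, a rough sketch follows. It assumes that each variable is assigned on its own line in the form `NAME=...`; the actual contents of export_model.sh are not reproduced in this article, so inspect the script before relying on this. `set_shell_var` is a hypothetical helper, not part of the repository.

```python
import re


def set_shell_var(script_text: str, name: str, value: str) -> str:
    """Replace the first line-level `name=...` assignment with `name="value"`."""
    pattern = re.compile(rf"^{re.escape(name)}=.*$", flags=re.MULTILINE)
    # A callable replacement avoids any backslash interpretation in `value`.
    new_text, count = pattern.subn(lambda _m: f'{name}="{value}"', script_text, count=1)
    if count == 0:
        raise KeyError(f"no assignment to {name} found")
    return new_text


def switch_to_onnx(script_text: str) -> str:
    """Apply the two changes described above to the text of the export script."""
    text = set_shell_var(script_text, "EXPORT_FORMAT", "onnx")
    return set_shell_var(text, "triton_model_name", "bertQA-onnx")
```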

Start the Triton server

The Triton server can be started with the following command:

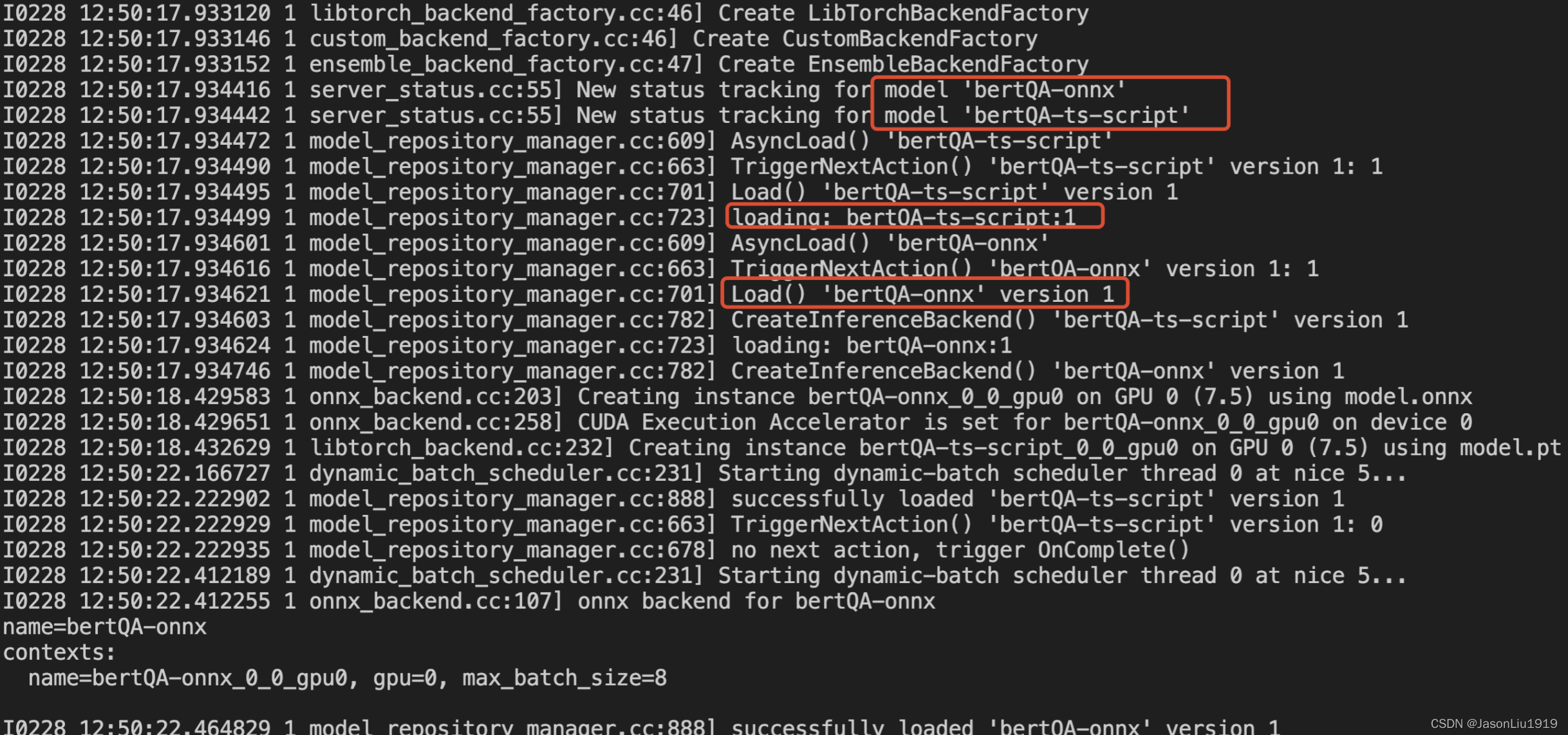

docker run --rm --gpus device=0 --ipc=host --network=host -p 8000:8000 -p 8001:8001 -p 8002:8002 -v $PWD/results/triton_models:/models nvcr.io/nvidia/tritonserver:20.06-v1-py3 trtserver --model-store=/models --log-verbose=1

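Because the image nvcr.io/nvidia/tritonserver:20.06-v1-py3 had not yet been pulled locally, running the command first pulls the image.

Also note that the model location given here, --model-store=/models, is mapped to ./results/triton_models. That directory contains two models, so both are loaded when the server starts: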

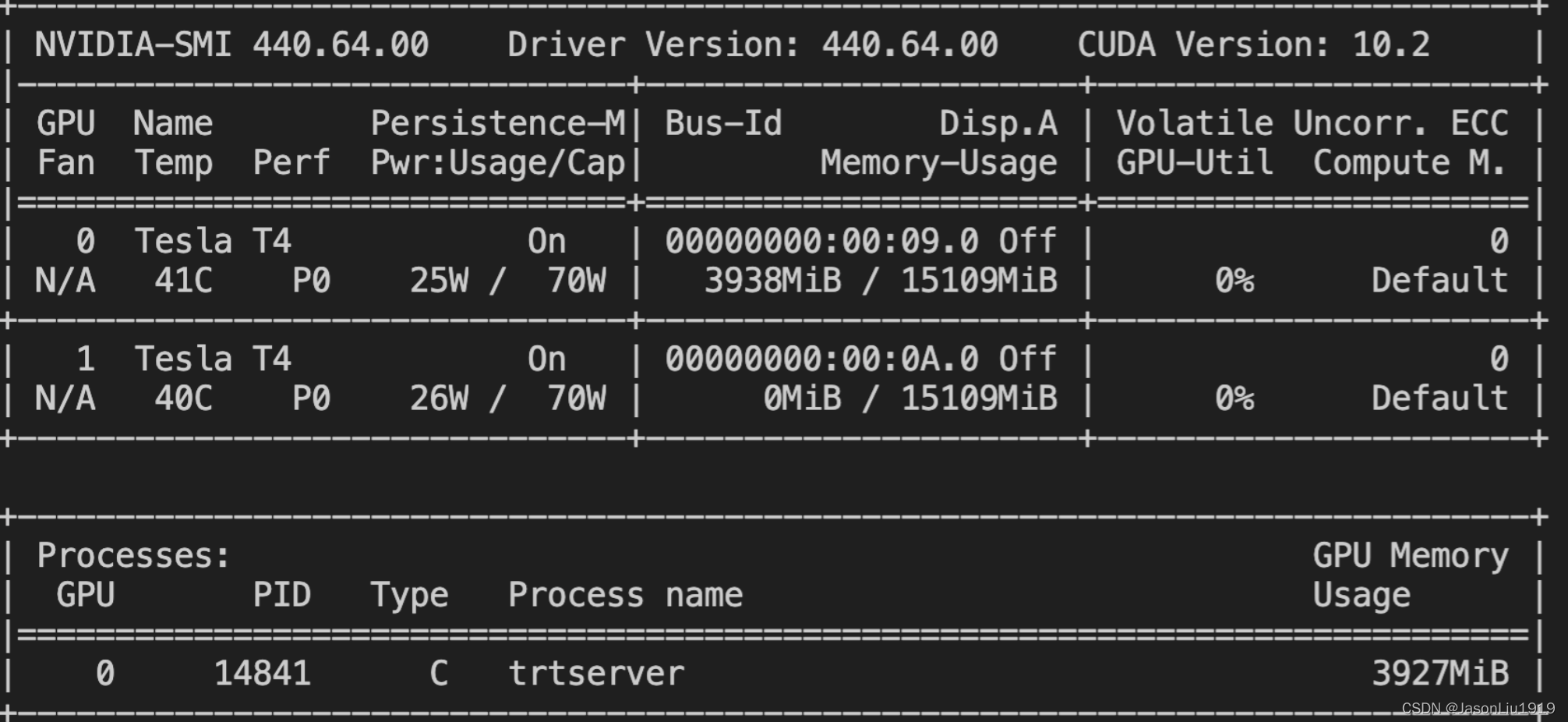
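Before sending requests it can help to wait until the server reports ready. The sketch below polls an HTTP health URL until it answers with status 200. The URL is left to the caller and is an assumption here: which health path applies depends on the server's API version (for example `/api/health/ready` on the v1 HTTP API or `/v2/health/ready` on the v2 API), so confirm it against the documentation for your image.

```python
import time
import urllib.request
from typing import Callable


def http_ok(url: str, timeout_s: float = 2.0) -> bool:
    """Return True if a GET on url succeeds with HTTP 200, False on any connection or HTTP error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as response:
            return response.status == 200
    except OSError:  # URLError and HTTPError are subclasses of OSError
        return False


def wait_until_ready(url: str,
                     probe: Callable[[str], bool] = http_ok,
                     attempts: int = 30,
                     interval_s: float = 1.0,
                     sleep: Callable[[float], None] = time.sleep) -> bool:
    """Call probe(url) up to `attempts` times, sleeping between tries; True once it succeeds."""
    for attempt in range(attempts):
        if probe(url):
            return True
        if attempt < attempts - 1:
            sleep(interval_s)
    return False
```

The `probe` and `sleep` parameters exist only so the loop can be exercised without a running server.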

Once the server is up, check the GPU memory usage:
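One way to do this from a script is to query `nvidia-smi` and parse its CSV output, as in the sketch below. It assumes `nvidia-smi` is on the PATH of the host; with the `nounits` option the memory values are reported in MiB.

```python
import subprocess
from typing import List, Tuple

# Query GPU index, used memory and total memory as plain CSV without header or units.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_memory(csv_text: str) -> List[Tuple[int, int, int]]:
    """Parse lines of 'index, used, total' into integer tuples."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field.strip()) for field in line.split(","))
        rows.append((index, used, total))
    return rows


def query_gpu_memory() -> List[Tuple[int, int, int]]:
    """Run nvidia-smi and return (gpu index, used MiB, total MiB) per GPU."""
    output = subprocess.run(QUERY, check=True, capture_output=True, text=True).stdout
    return parse_gpu_memory(output)
```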

Start the custom Triton client

./triton/client.py is the custom client code.

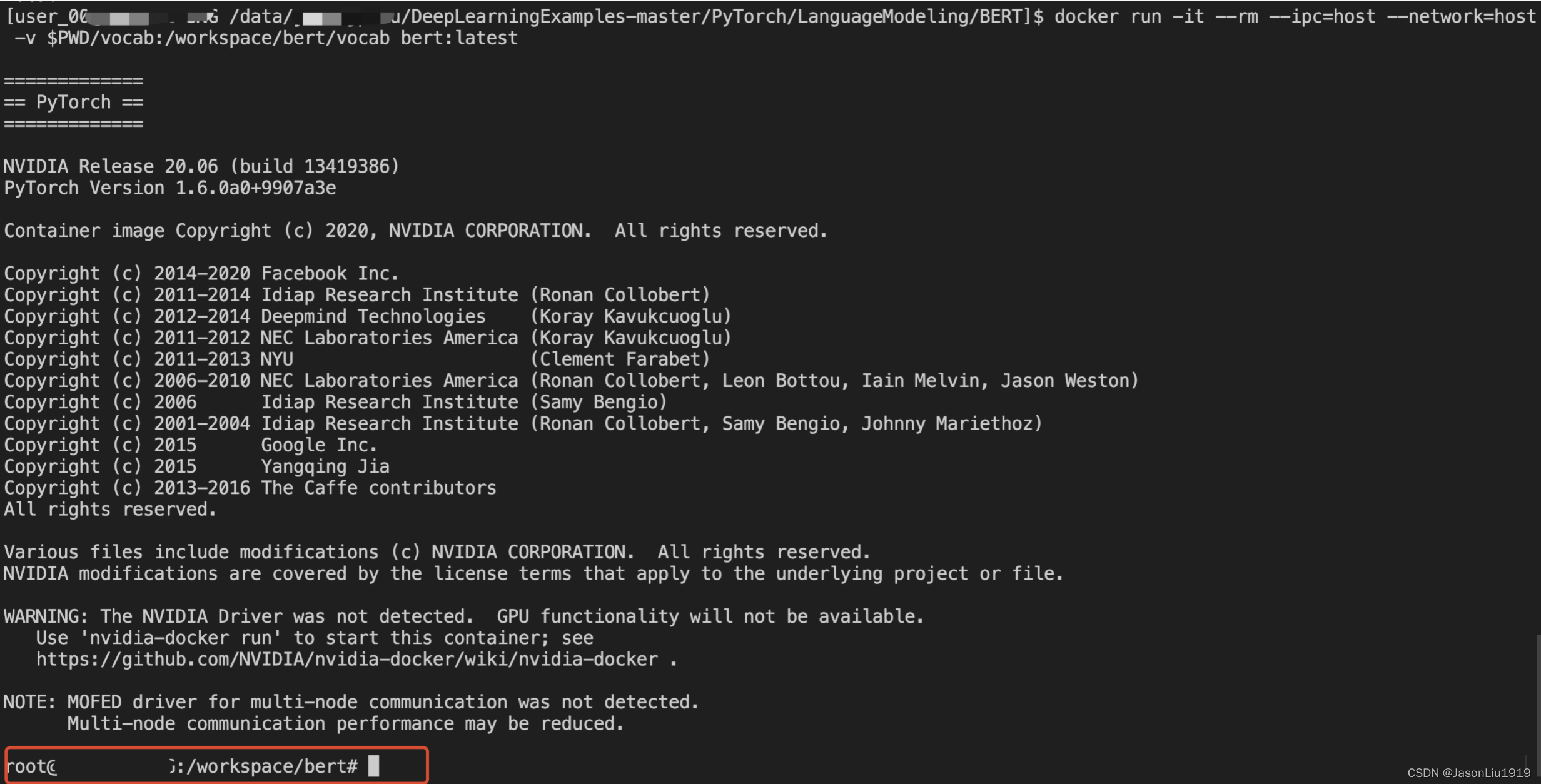

Step 1: Start a client container

docker run -it --rm --ipc=host --network=host -v $PWD/vocab:/workspace/bert/vocab bert:latest

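PS:

The client does not need a GPU. Because the container is started with --rm, it is destroyed automatically once you exit it from the terminal.

This starts a container and drops you into it.

Step 2: Start the client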

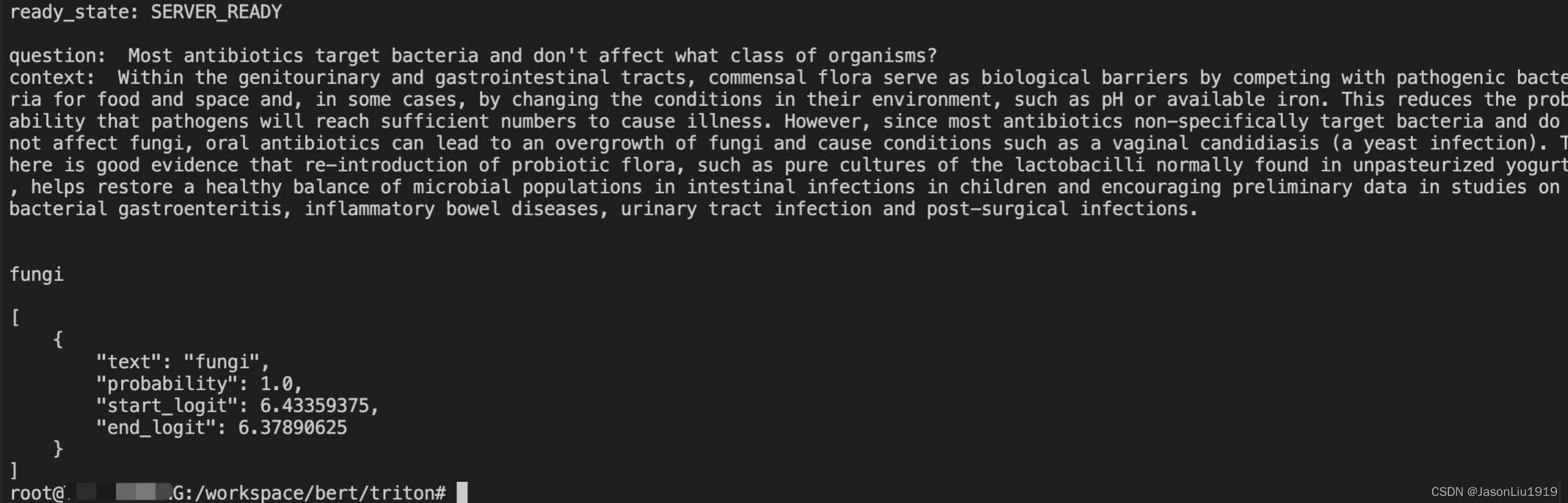

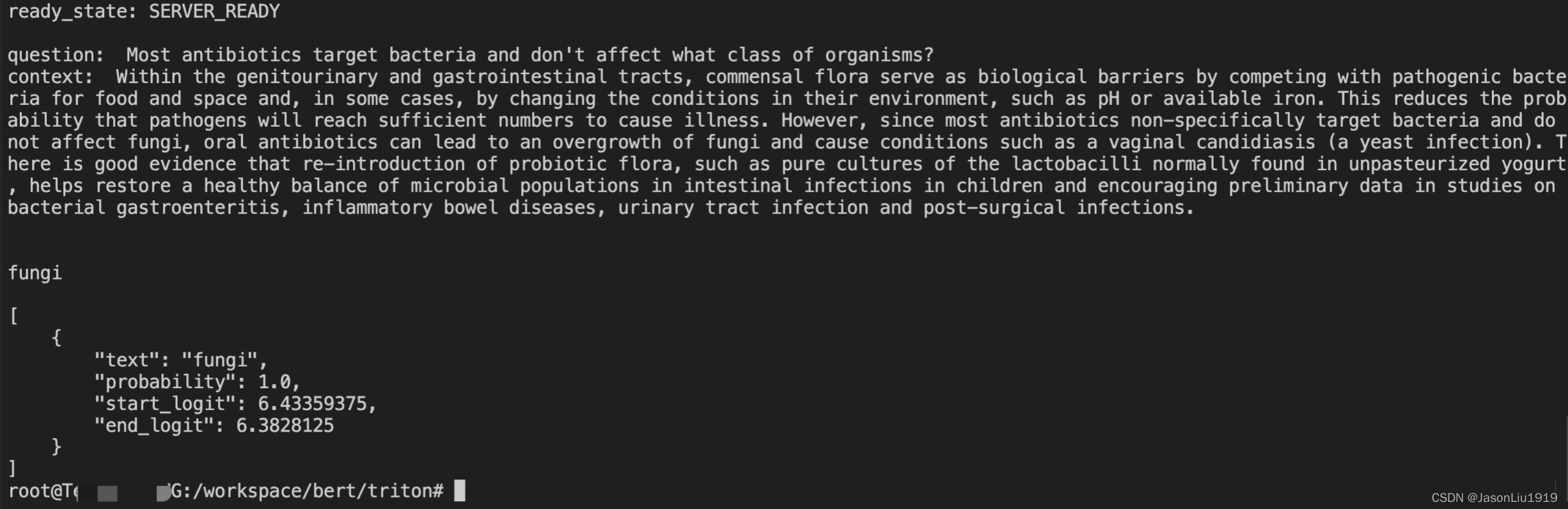

Change into the client code directory with cd /workspace/bert/triton/, then run the following to send a request to the bertQA-ts-script model:

python client.py --do_lower_case --version_2_with_negative --vocab_file=../vocab/vocab --triton-model-name=bertQA-ts-script

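The client then sends a request to the running Triton server, which processes it and returns the result. To supply your own passage and question, pass the --question and --context arguments to client.py. The --triton-model-name argument selects which model to query. The server has loaded two models here, so the client can also query the ONNX model: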

python client.py --do_lower_case --version_2_with_negative --vocab_file=../vocab/vocab --triton-model-name=bertQA-onnx
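When the question and context come from another program, assembling the command as an argument list avoids shell-quoting problems. The sketch below uses only the flags that appear in this article; `build_client_command` is a hypothetical helper, and passing `--question` and `--context` as separate arguments assumes that client.py parses its options with a standard argument parser.

```python
from typing import List, Optional


def build_client_command(model_name: str,
                         vocab_file: str = "../vocab/vocab",
                         question: Optional[str] = None,
                         context: Optional[str] = None) -> List[str]:
    """Build the argv list for client.py; question and context are optional."""
    argv = [
        "python", "client.py",
        "--do_lower_case",
        "--version_2_with_negative",
        f"--vocab_file={vocab_file}",
        f"--triton-model-name={model_name}",
    ]
    if question is not None:
        argv.extend(["--question", question])
    if context is not None:
        argv.extend(["--context", context])
    return argv
```

The resulting list can be handed to `subprocess.run(argv, check=True)` from inside the client container, in the /workspace/bert/triton/ directory.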

Evaluating the deployed model: SQuAD 1.1

To deploy and evaluate the model, run the following command on the host:

bash ./triton/evaluate.sh

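PS:

Before deploying and evaluating, shut down the Triton server started earlier; otherwise the ports will conflict.

Output from server startup and the evaluation run: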

deploying model bert_large_fp32 in format pytorch_libtorch

/opt/conda/lib/python3.6/site-packages/torch/jit/_recursive.py:160: UserWarning: 'bias' was found in ScriptModule constants, but it is a non-constant parameter. Consider removing it.

" but it is a non-constant {}. Consider removing it.".format(name, hint))

conversion correctness test results

-----------------------------------

maximal absolute error over dataset (L_inf): 1.4185905456542969e-05

average L_inf error over output tensors: 1.0482966899871826e-05

variance of L_inf error over output tensors: 8.773056355456296e-12

stddev of L_inf error over output tensors: 2.961934562993635e-06

time of error check of native model: 1.596167802810669 seconds

time of error check of ts model: 2.414717435836792 seconds

done

Starting server...

Waiting for TRITON Server to be ready at http://localhost:8000...

000

.......TRITON Server is ready!

Sending Requests: 100%|███████████████████████████████████████████████████████████████████████████| 10833/10833 [04:20<00:00, 27.84sentences/s-----------------------------█████████████████████████████████████████████████████████████████████▉| 10832/10833 [14:29<00:00, 12.28sentences/s]

Individual Time Runs

Total Time: 869886.3623142242 ms

-----------------------------

-----------------------------

Total Inference Time = 432310.23 forSentences processed = 10833

Throughput Average (sentences/sec) = 12.45

Throughput Average (batches/sec) = 1.56

-----------------------------

-----------------------------

Summary Statistics

Batch size = 8

Sequence Length = 384

Latency Confidence Level 95 (ms) = 594040.61627388

Latency Confidence Level 99 (ms) = 615392.275094986

Latency Confidence Level 100 (ms) = 619993.6480522156

Latency Average (ms) = 319048.1366518239

-----------------------------

Sending Requests: 100%|███████████████████████████████████████████████████████████████████████████| 10833/10833 [15:16<00:00, 11.82sentences/s]

Processed Requests: 100%|█████████████████████████████████████████████████████████████████████████| 10833/10833 [15:16<00:00, 11.82sentences/s]

trt_server_cont

tritonnet

Note that by default the service is deployed in TorchScript format and evaluated on the SQuAD 1.1 dataset. To evaluate the ONNX model instead, change EXPORT_FORMAT in ./triton/evaluate.sh from ts-script to onnx.

deploying model bert_large_fp32 in format onnxruntime_onnx

/opt/conda/lib/python3.6/site-packages/torch/onnx/utils.py:955: UserWarning: No names were found for specified dynamic axes of provided input.Automatically generated names will be applied to each dynamic axes of input input__0

'Automatically generated names will be applied to each dynamic axes of input {}'.format(key))

/opt/conda/lib/python3.6/site-packages/torch/onnx/utils.py:955: UserWarning: No names were found for specified dynamic axes of provided input.Automatically generated names will be applied to each dynamic axes of input input__1

'Automatically generated names will be applied to each dynamic axes of input {}'.format(key))

/opt/conda/lib/python3.6/site-packages/torch/onnx/utils.py:955: UserWarning: No names were found for specified dynamic axes of provided input.Automatically generated names will be applied to each dynamic axes of input input__2

'Automatically generated names will be applied to each dynamic axes of input {}'.format(key))

/opt/conda/lib/python3.6/site-packages/torch/onnx/utils.py:955: UserWarning: No names were found for specified dynamic axes of provided input.Automatically generated names will be applied to each dynamic axes of input output__0

'Automatically generated names will be applied to each dynamic axes of input {}'.format(key))

/opt/conda/lib/python3.6/site-packages/torch/onnx/utils.py:955: UserWarning: No names were found for specified dynamic axes of provided input.Automatically generated names will be applied to each dynamic axes of input output__1

'Automatically generated names will be applied to each dynamic axes of input {}'.format(key))

[libprotobuf WARNING google/protobuf/io/coded_stream.cc:604] Reading dangerously large protocol message. If the message turns out to be larger than 2147483647 bytes, parsing will be halted for security reasons. To increase the limit (or to disable these warnings), see CodedInputStream::SetTotalBytesLimit() in google/protobuf/io/coded_stream.h.

[libprotobuf WARNING google/protobuf/io/coded_stream.cc:81] The total number of bytes read was 1336539136

conversion correctness test results

-----------------------------------

maximal absolute error over dataset (L_inf): 0.00022530555725097656

average L_inf error over output tensors: 0.0001377016305923462

variance of L_inf error over output tensors: 6.448256743378049e-09

stddev of L_inf error over output tensors: 8.030103824595327e-05

time of error check of native model: 1.2507586479187012 seconds

time of error check of onnx model: 76.80649089813232 seconds

done

Starting server...

Waiting for TRITON Server to be ready at http://localhost:8000...

000

.......TRITON Server is ready!

Sending Requests: 100%|███████████████████████████████████████████████████████████████████████████| 10833/10833 [04:40<00:00, 15.52sentences/s-----------------------------█████████████████████████████████████████████████████████████████████▉| 10832/10833 [14:23<00:00, 12.42sentences/s]

Individual Time Runs

Total Time: 863938.3265972137 ms

-----------------------------

-----------------------------

Total Inference Time = 418017.89 forSentences processed = 10833

Throughput Average (sentences/sec) = 12.54

Throughput Average (batches/sec) = 1.57

-----------------------------

-----------------------------

Summary Statistics

Batch size = 8

Sequence Length = 384

Latency Confidence Level 95 (ms) = 568533.2419872284

Latency Confidence Level 99 (ms) = 591532.5634479523

Latency Confidence Level 100 (ms) = 595446.0487365723

Latency Average (ms) = 308500.2912194087

-----------------------------

Sending Requests: 100%|███████████████████████████████████████████████████████████████████████████| 10833/10833 [15:10<00:00, 11.90sentences/s]

Processed Requests: 100%|█████████████████████████████████████████████████████████████████████████| 10833/10833 [15:10<00:00, 11.90sentences/s]

trt_server_cont

tritonnet
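The throughput figures in the two evaluation logs can be cross-checked from the other logged numbers. Dividing the 10833 sentences by the reported Total Time reproduces the logged sentences/sec in both runs, and dividing that by the batch size of 8 reproduces the batches/sec. This is an observation from the numbers themselves, not a statement about how the evaluation script computes them.

```python
from typing import Tuple


def throughput(sentences: int, total_time_ms: float, batch_size: int) -> Tuple[float, float]:
    """Return (sentences per second, batches per second) over the total wall-clock time."""
    sentences_per_s = sentences / (total_time_ms / 1000.0)
    return sentences_per_s, sentences_per_s / batch_size


# Values copied from the "Total Time", "Sentences processed" and "Batch size" log lines.
TS_SCRIPT = throughput(10833, 869886.3623142242, 8)
ONNX = throughput(10833, 863938.3265972137, 8)

print("ts-script: %.2f sentences/s, %.2f batches/s" % TS_SCRIPT)
print("onnx:      %.2f sentences/s, %.2f batches/s" % ONNX)
```

On this hardware the two formats land within about one percent of each other in end-to-end throughput.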
