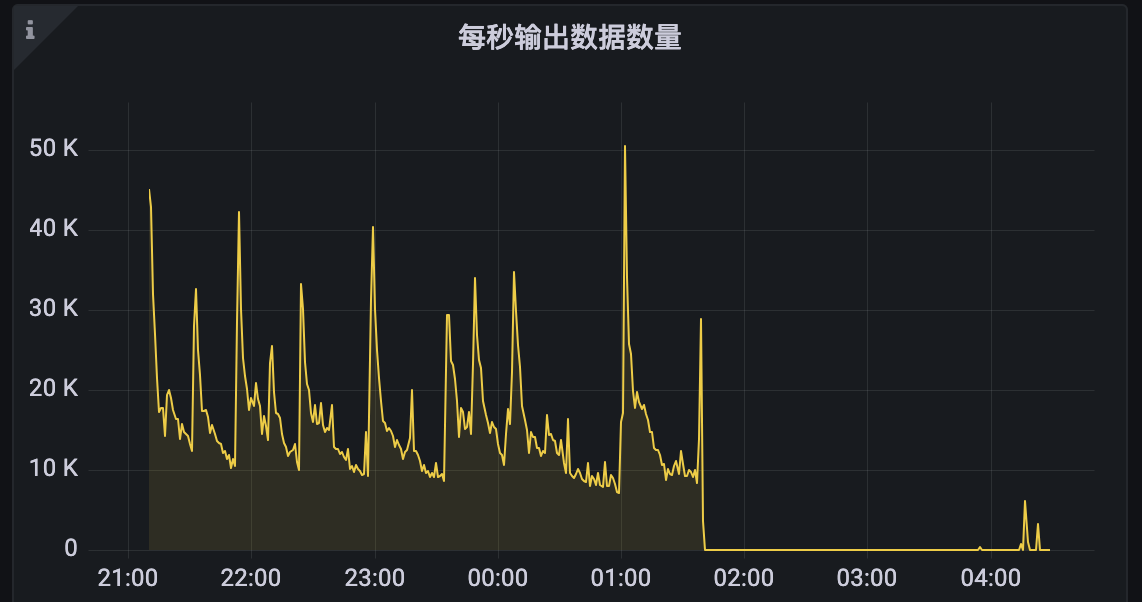

Question 1: Why has Flink CDC stopped reading data ever since this task spiked?


Reference answer:

The connection to the binlog service failed. It looks like a large batch of data was deleted.

For more answers to this question, see:

https://developer.aliyun.com/ask/579999

Question 2: Which Flink version should Flink CDC 2.x be used with? Building it against Flink 1.12 seems to throw exceptions.


Reference answer:

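Flink CDC 2.x needs a newer Flink than 1.12. According to the version compatibility table in the flink-cdc-connectors README, CDC 2.0.x and 2.1.x target Flink 1.13.x, later 2.x releases add Flink 1.14 and above, and Flink 1.12 is served by the CDC 1.2.x/1.3.x releases. Flink CDC is built on the Flink stream-processing engine, and APIs and features differ between Flink versions, so building CDC 2.x against Flink 1.12 is expected to fail with compatibility errors like the ones you are seeing. Separately, the flink-cdc-connectors component developed by the Flink community can read both the full snapshot and the incremental changes directly from databases such as MySQL and PostgreSQL, which is a major advantage of this Debezium-based Flink CDC tooling.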
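To check a CDC/Flink pairing before building, the compatibility table can be encoded as a simple lookup. This is a minimal sketch: the `CDC_TO_FLINK` mapping is transcribed from the flink-cdc-connectors README as we remember it and should be verified against the docs for your release; `minor` and `is_compatible` are hypothetical helper names.

```python
# Hypothetical lookup: CDC "major.minor" -> supported Flink "major.minor" versions.
# Transcribed (as an assumption) from the flink-cdc-connectors README table.
CDC_TO_FLINK = {
    "1.2": ["1.12"],
    "1.3": ["1.12"],
    "2.0": ["1.13"],
    "2.1": ["1.13"],
    "2.2": ["1.13", "1.14"],
    "2.3": ["1.13", "1.14", "1.15", "1.16"],
    "2.4": ["1.13", "1.14", "1.15", "1.16", "1.17"],
}


def minor(version: str) -> str:
    """Reduce a version such as '2.0.2' or '1.12.7' to its 'major.minor' form."""
    parts = version.split(".")
    if len(parts) < 2:
        raise ValueError(f"not a major.minor version: {version!r}")
    return ".".join(parts[:2])


def is_compatible(cdc_version: str, flink_version: str) -> bool:
    """Return True if the table lists flink_version as supported by cdc_version."""
    supported = CDC_TO_FLINK.get(minor(cdc_version))
    if supported is None:
        raise KeyError(f"CDC version {cdc_version!r} is not in the table")
    return minor(flink_version) in supported


if __name__ == "__main__":
    print(is_compatible("2.0.2", "1.12.7"))  # False
    print(is_compatible("2.0.2", "1.13.6"))  # True
```

Matching only on `major.minor` mirrors how the README lists versions (for example `1.13.*`); patch releases are assumed to be interchangeable.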

For more answers to this question, see:

https://developer.aliyun.com/ask/579998

Question 3: During Flink CDC's skip-read phase, are the other tasks idle? Will an overly long skip-read cause exceptions?


Reference answer:

"After all snapshot chunks have been read, a special binlog chunk is sent that carries the reports of all the snapshot chunks just completed. The binlog reader uses these reports to perform a skip-read, filtering each event against the position of its own chunk, and once the skip-read is finished it switches to pure binlog reading. The skip-read has to filter events against the close (high-watermark) position each snapshot chunk recorded when it finished reading its full data, to avoid duplicate records; pure binlog reading, after the skip-read completes, reads every changelog event that belongs to the target tables." Because this single binlog chunk is handled by one reader, the other source subtasks have no split to read during this phase and stay idle.

Stack trace:

    java.lang.RuntimeException: One or more fetchers have encountered exception
        at org.apache.flink.connector.base.source.reader.fetcher.SplitFetcherManager.checkErrors(SplitFetcherManager.java:223)
        at org.apache.flink.connector.base.source.reader.SourceReaderBase.getNextFetch(SourceReaderBase.java:154)
        at org.apache.flink.connector.base.source.reader.SourceReaderBase.pollNext(SourceReaderBase.java:116)
        at org.apache.flink.streaming.api.operators.SourceOperator.emitNext(SourceOperator.java:305)
        at org.apache.flink.streaming.runtime.io.StreamTaskSourceInput.emitNext(StreamTaskSourceInput.java:69)
        at org.apache.flink.streaming.runtime.io.StreamOneInputProcessor.processInput(StreamOneInputProcessor.java:66)
        at org.apache.flink.streaming.runtime.tasks.StreamTask.processInput(StreamTask.java:423)
        at org.apache.flink.streaming.runtime.tasks.mailbox.MailboxProcessor.runMailboxLoop(MailboxProcessor.java:204)
        at org.apache.flink.streaming.runtime.tasks.StreamTask.runMailboxLoop(StreamTask.java:684)
        at org.apache.flink.streaming.runtime.tasks.StreamTask.executeInvoke(StreamTask.java:639)
        at org.apache.flink.streaming.runtime.tasks.StreamTask.runWithCleanUpOnFail(StreamTask.java:650)
        at org.apache.flink.streaming.runtime.tasks.StreamTask.invoke(StreamTask.java:623)
        at org.apache.flink.runtime.taskmanager.Task.doRun(Task.java:779)
        at org.apache.flink.runtime.taskmanager.Task.run(Task.java:566)
        at java.lang.Thread.run(Thread.java:748)
    Caused by: java.lang.RuntimeException: SplitFetcher thread 0 received unexpected exception while polling the records
        at org.apache.flink.connector.base.source.reader.fetcher.SplitFetcher.runOnce(SplitFetcher.java:148)
        at org.apache.flink.connector.base.source.reader.fetcher.SplitFetcher.run(SplitFetcher.java:103)
        at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
        at java.util.concurrent.FutureTask.run(FutureTask.java:266)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
        ... 1 more
    Caused by: com.ververica.cdc.connectors.shaded.org.apache.kafka.connect.errors.ConnectException: An exception occurred in the change event producer. This connector will be stopped.
        at io.debezium.pipeline.ErrorHandler.setProducerThrowable(ErrorHandler.java:42)
        at io.debezium.connector.mysql.MySqlStreamingChangeEventSource$ReaderThreadLifecycleListener.onCommunicationFailure(MySqlStreamingChangeEventSource.java:1185)
        at com.github.shyiko.mysql.binlog.BinaryLogClient.listenForEventPackets(BinaryLogClient.java:973)
        at com.github.shyiko.mysql.binlog.BinaryLogClient.connect(BinaryLogClient.java:606)
        at com.github.shyiko.mysql.binlog.BinaryLogClient$7.run(BinaryLogClient.java:850)
        ... 1 more
    Caused by: io.debezium.DebeziumException
        at io.debezium.connector.mysql.MySqlStreamingChangeEventSource.wrap(MySqlStreamingChangeEventSource.java:1146)
        ... 5 more
    Caused by: java.io.EOFException
        at com.github.shyiko.mysql.binlog.io.ByteArrayInputStream.read(ByteArrayInputStream.java:209)
        at com.github.shyiko.mysql.binlog.io.ByteArrayInputStream.readInteger(ByteArrayInputStream.java:51)
        at com.github.shyiko.mysql.binlog.event.deserialization.EventHeaderV4Deserializer.deserialize(EventHeaderV4Deserializer.java:35)
        at com.github.shyiko.mysql.binlog.event.deserialization.EventHeaderV4Deserializer.deserialize(EventHeaderV4Deserializer.java:27)
        at com.github.shyiko.mysql.binlog.event.deserialization.EventDeserializer.nextEvent(EventDeserializer.java:221)
        at io.debezium.connector.mysql.MySqlStreamingChangeEventSource$1.nextEvent(MySqlStreamingChangeEventSource.java:233)
        at com.github.shyiko.mysql.binlog.BinaryLogClient.listenForEventPackets(BinaryLogClient.java:945)
        ... 3 more
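The skip-read filtering described in the answer can be sketched as follows. This is a simplified model, not Flink CDC's actual code: binlog positions are plain integers, primary keys are integers, and `FinishedChunk`, `BinlogEvent`, and `read_binlog` are hypothetical names. During skip-read, an event is emitted only if it comes after the high watermark of the snapshot chunk whose key range contains it; once a table's position passes the largest high watermark among its chunks, every event for that table is emitted (pure binlog reading). In this model, binlog reading would start at the smallest high watermark, and anything earlier is dropped by the same filter.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, List, Optional


@dataclass(frozen=True)
class FinishedChunk:
    """Report from one finished snapshot chunk (hypothetical, simplified)."""
    table: str
    key_start: Optional[int]  # inclusive lower bound; None = unbounded
    key_end: Optional[int]    # exclusive upper bound; None = unbounded
    high_watermark: int       # binlog position recorded when the chunk closed


@dataclass(frozen=True)
class BinlogEvent:
    offset: int  # binlog position of the change
    table: str
    key: int     # primary key of the changed row


def _contains(chunk: FinishedChunk, event: BinlogEvent) -> bool:
    """True if the event's row falls inside the chunk's table and key range."""
    if chunk.table != event.table:
        return False
    if chunk.key_start is not None and event.key < chunk.key_start:
        return False
    if chunk.key_end is not None and event.key >= chunk.key_end:
        return False
    return True


def read_binlog(chunks: List[FinishedChunk],
                events: Iterable[BinlogEvent]) -> Iterator[BinlogEvent]:
    # Largest high watermark per table: past it, the table is in pure binlog mode.
    max_hw: Dict[str, int] = {}
    for c in chunks:
        max_hw[c.table] = max(max_hw.get(c.table, c.high_watermark), c.high_watermark)

    for ev in events:
        if ev.table not in max_hw:
            continue  # not a captured table
        if ev.offset > max_hw[ev.table]:
            yield ev  # pure binlog phase for this table
            continue
        # Skip-read phase: emit only changes the chunk's snapshot has not seen.
        chunk = next((c for c in chunks if _contains(c, ev)), None)
        if chunk is not None and ev.offset > chunk.high_watermark:
            yield ev


if __name__ == "__main__":
    chunks = [FinishedChunk("orders", None, 100, 10),
              FinishedChunk("orders", 100, None, 20)]
    events = [BinlogEvent(8, "orders", 5), BinlogEvent(12, "orders", 5),
              BinlogEvent(15, "orders", 150), BinlogEvent(22, "orders", 150),
              BinlogEvent(25, "users", 1)]
    print([e.offset for e in read_binlog(chunks, events)])  # [12, 22]
```

In the example, offset 15 is dropped because its row belongs to the second chunk, whose snapshot already covers everything up to position 20.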

For more answers to this question, see:

https://developer.aliyun.com/ask/579997

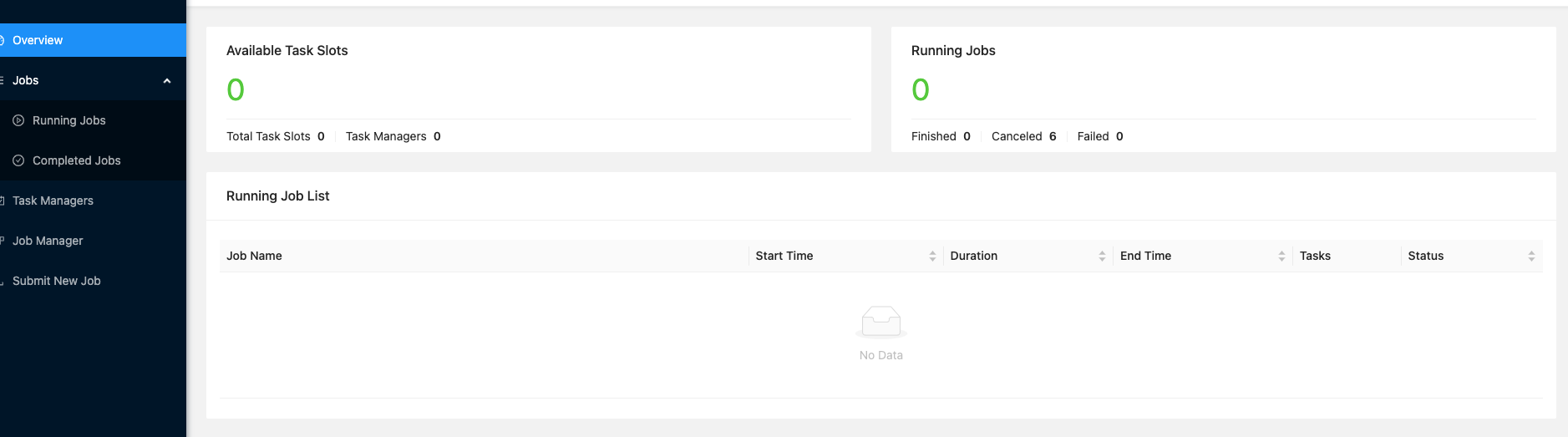

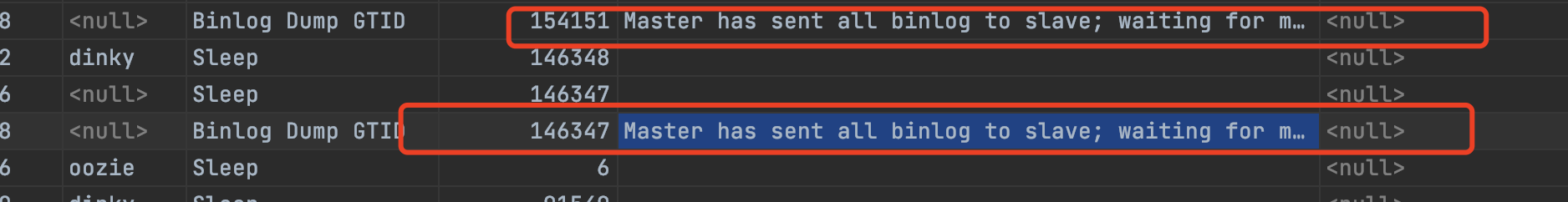

Question 4: flink-cdc is listening to a MySQL database, but I have stopped all the jobs. How do I fix this?

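flink-cdc is listening to a MySQL database, and I have stopped all the jobs. Yet when I modify data in MySQL, the change is still captured and synced to the SR (StarRocks) database. How is that possible?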

I can see that the database connection is indeed still there, listening.

Reference answer:

The job was not actually killed. Kill it explicitly from the command line.
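On the MySQL side, a still-running binlog client normally appears in `SHOW PROCESSLIST` output with the Command `Binlog Dump` or `Binlog Dump GTID`, and MySQL's `KILL <id>` statement terminates that thread. The sketch below assumes you have already fetched the processlist rows as dictionaries (for example with any MySQL driver) and that the CDC job connects as a dedicated user; the user name `flink_cdc` and the function `kill_statements` are hypothetical. Killing the MySQL thread only drops the connection: if the Flink job or its process is still alive it may reconnect, so stopping the job itself (for example with `flink cancel <jobId>`) remains the actual fix.

```python
from typing import Dict, List, Sequence

# Command values MySQL reports for threads serving a binlog replication client.
BINLOG_DUMP_COMMANDS = {"Binlog Dump", "Binlog Dump GTID"}


def kill_statements(processlist: Sequence[Dict[str, object]], cdc_user: str) -> List[str]:
    """Build KILL statements for binlog dump threads opened by cdc_user.

    Each row is assumed to use the SHOW PROCESSLIST column names
    (Id, User, Host, db, Command, Time, State, Info).
    """
    statements = []
    for row in processlist:
        if row.get("User") == cdc_user and row.get("Command") in BINLOG_DUMP_COMMANDS:
            statements.append(f"KILL {int(row['Id'])};")
    return statements


if __name__ == "__main__":
    rows = [
        {"Id": 7, "User": "flink_cdc", "Command": "Binlog Dump GTID"},
        {"Id": 8, "User": "flink_cdc", "Command": "Sleep"},
        {"Id": 9, "User": "app", "Command": "Binlog Dump"},
    ]
    print(kill_statements(rows, "flink_cdc"))  # ['KILL 7;']
```

Filtering by both user and command avoids killing replicas or other CDC tools that also hold binlog dump connections.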

For more answers to this question, see:

https://developer.aliyun.com/ask/579996

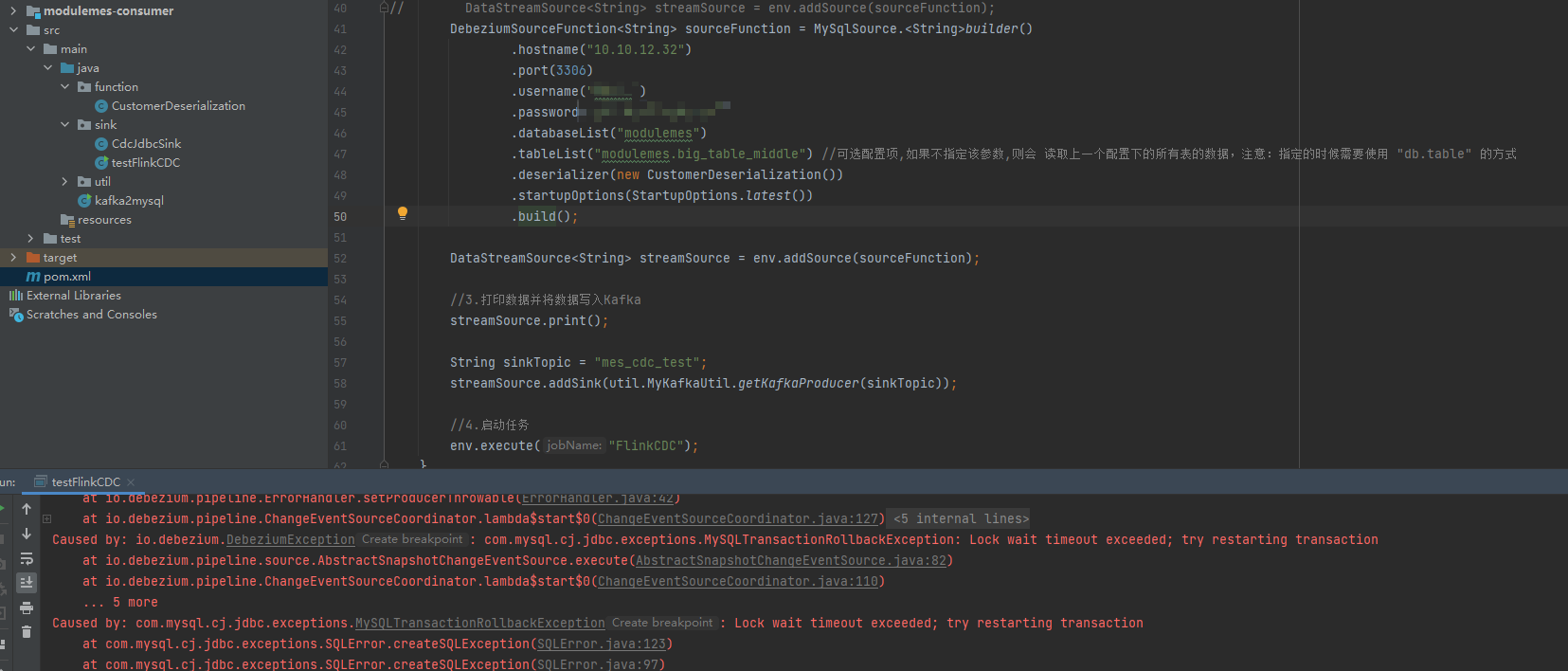

Question 5: This happened when Flink CDC was reading a MySQL production database. How should I handle it?

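This happened when Flink CDC was reading a MySQL production database. From what I found online, it is caused by a failure to acquire the read lock. I tried changing my pom file to flink-cdc 2.0, but the lock-free reading of 2.0 does not seem to have taken effect. How should I handle this?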

Reference answer:

Just rebuild the job with the 2.x connector and redeploy it, so that the running job actually uses 2.x.
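Since the asker changed the pom but lock-free reading still did not take effect, it is worth confirming which connector the build really declares. The 1.x MySQL CDC connector was published under the groupId `com.alibaba.ververica`, while 2.x moved to `com.ververica`, so both the groupId and the version need to change. In 2.x, lock-free reading comes from the incremental snapshot algorithm (option `scan.incremental.snapshot.enabled`, on by default). The helper below is a hypothetical sketch, not part of Flink CDC: it scans a pom with Python's standard `xml.etree.ElementTree`, and it does not resolve `${...}` properties, parent POMs, or transitive dependencies.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple

CDC_ARTIFACTS = ("flink-connector-mysql-cdc", "flink-sql-connector-mysql-cdc")


def _local(tag: str) -> str:
    """Strip an XML namespace such as '{http://maven.apache.org/POM/4.0.0}'."""
    return tag.rsplit("}", 1)[-1]


def mysql_cdc_dependencies(pom_xml: str) -> List[Tuple[str, str]]:
    """Return (groupId, version) for every MySQL CDC dependency in the pom."""
    root = ET.fromstring(pom_xml)
    found = []
    for element in root.iter():
        if _local(element.tag) != "dependency":
            continue
        # Only direct children: groupId, artifactId, version, scope, ...
        fields = {_local(child.tag): (child.text or "").strip() for child in element}
        if fields.get("artifactId") in CDC_ARTIFACTS:
            found.append((fields.get("groupId", ""), fields.get("version", "")))
    return found


def uses_cdc_2x(pom_xml: str) -> bool:
    """True only if every MySQL CDC dependency is a com.ververica 2.x artifact."""
    deps = mysql_cdc_dependencies(pom_xml)
    return bool(deps) and all(g == "com.ververica" and v.startswith("2.") for g, v in deps)


if __name__ == "__main__":
    old_pom = """<project xmlns="http://maven.apache.org/POM/4.0.0"><dependencies>
      <dependency><groupId>com.alibaba.ververica</groupId>
        <artifactId>flink-connector-mysql-cdc</artifactId><version>1.4.0</version></dependency>
    </dependencies></project>"""
    print(mysql_cdc_dependencies(old_pom))  # [('com.alibaba.ververica', '1.4.0')]
    print(uses_cdc_2x(old_pom))             # False
```

Even when the pom is correct, the job has to be repackaged and resubmitted; a jar built before the change still contains the 1.x connector.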

For more answers to this question, see: