1. Problem Description


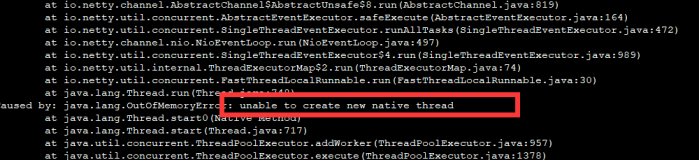

Earlier, I set the JVM -Xss parameter to deal with java.lang.OutOfMemoryError: unable to create new native thread (see http://zouqingyun.blog.51cto.com/782246/1879975).

However, the NodeManager still throws this exception, and map-reduce tasks now hit it as well.

2. Observations

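I ran a map-reduce job that processes nothing but small files, and it ended up with more than 20,000 map tasks. Many of the job's tasks failed with java.lang.OutOfMemoryError: unable to create new native thread. Watching some of these tasks, I found that the number of threads in their thread dumps kept growing until it passed 7,000, at which point the error was thrown. Each map task is allocated 800 MB of memory and ThreadStackSize is left at its default of 1024 KB, so memory eventually runs out. The task's thread dump keeps showing entries like the following: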

```
"Thread-3689" daemon prio=10 tid=0x00007fb6bf364000 nid=0x2331 in Object.wait() [0x00007fb5b9b94000]
   java.lang.Thread.State: TIMED_WAITING (on object monitor)
    at java.lang.Object.wait(Native Method)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:638)
    - locked <0x00000000f89800d0> (a java.util.LinkedList)

"Thread-3688" daemon prio=10 tid=0x00007fb6bf362000 nid=0x10a9 in Object.wait() [0x00007fb5b9c95000]
   java.lang.Thread.State: TIMED_WAITING (on object monitor)
    at java.lang.Object.wait(Native Method)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:638)
    - locked <0x00000000f89701c0> (a java.util.LinkedList)

"Thread-3687" daemon prio=10 tid=0x00007fb6bf35a800 nid=0xf23 in Object.wait() [0x00007fb5b9d96000]
   java.lang.Thread.State: TIMED_WAITING (on object monitor)
    at java.lang.Object.wait(Native Method)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:638)
    - locked <0x00000000f89681c0> (a java.util.LinkedList)

"Thread-3686" daemon prio=10 tid=0x00007fb6bf358800 nid=0xde9 in Object.wait() [0x00007fb5b9e97000]
   java.lang.Thread.State: TIMED_WAITING (on object monitor)
    at java.lang.Object.wait(Native Method)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:638)
```
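The memory claim in the observations can be checked with back-of-the-envelope arithmetic. The Java sketch below multiplies the observed thread count (about 7,000) by the default 1024 KB thread stack size and compares the result with the 800 MB allocated to each map task. The class and method names are illustrative only. Keep in mind that this figure is reserved (virtual) stack space, an upper bound on what the threads can touch rather than their resident memory.

```java
/**
 * Back-of-the-envelope check of the observed numbers: ~7000 threads, each
 * reserving the default 1024 KB thread stack, inside an 800 MB map task.
 * Illustrative sketch; the result is reserved stack space, not resident memory.
 */
public class StackReservationEstimate {

    /** Total stack space reserved, in bytes, for a thread count and a per-thread stack size in KB. */
    static long reservedStackBytes(long threads, long stackSizeKb) {
        return threads * stackSizeKb * 1024L;
    }

    public static void main(String[] args) {
        long threads = 7_000;      // thread count observed in the task's thread dump
        long stackSizeKb = 1024;   // ThreadStackSize left at its default
        long containerMb = 800;    // memory allocated to each map task

        long stackMb = reservedStackBytes(threads, stackSizeKb) / (1024L * 1024L);
        System.out.printf("Reserved stack: %d MB vs. task allocation: %d MB (%.1fx)%n",
                stackMb, containerMb, (double) stackMb / containerMb);
    }
}
```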

3. Hypotheses

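1. The NodeManager exception may be related to this job. When all tasks of this map-reduce job are scheduled onto one machine (roughly 40 containers) and the task in each container spawns about 7,000 threads (because it writes many small files?), the node's max user processes limit (262144) is exhausted; on Linux, every thread counts toward this limit. When the NodeManager then needs to create a new thread, it fails with java.lang.OutOfMemoryError: unable to create new native thread. (P.S. This job was indeed running on its schedule yesterday.)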
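Hypothesis 1 comes down to simple arithmetic, sketched below in Java. It assumes (not verified in this post) that the containers run as the same Unix user as the NodeManager, as they do for example under DefaultContainerExecutor, so that all their threads share one per-user limit. The class and method names are hypothetical.

```java
/**
 * Checks hypothesis 1: ~40 containers on one node, each with ~7000 threads,
 * against a max user processes limit (ulimit -u) of 262144. On Linux this
 * limit counts threads. ASSUMPTION: containers and the NodeManager run as
 * the same user, so they draw from the same pool of slots.
 */
public class NprocBudget {

    /** Total threads the containers would hold. */
    static long containerThreads(long containers, long threadsPerContainer) {
        return containers * threadsPerContainer;
    }

    /** Slots left for other threads of the same user; zero or negative means the limit is exhausted. */
    static long remainingSlots(long limit, long containers, long threadsPerContainer) {
        return limit - containerThreads(containers, threadsPerContainer);
    }

    public static void main(String[] args) {
        long limit = 262_144;       // max user processes on the node
        long containers = 40;       // approximate containers of this job on one machine
        long perContainer = 7_000;  // threads observed per task

        long used = containerThreads(containers, perContainer);
        long left = remainingSlots(limit, containers, perContainer);
        System.out.println("container threads = " + used + ", limit = " + limit + ", remaining = " + left);
        if (left <= 0) {
            System.out.println("Limit exhausted: new threads for this user, including the NodeManager's, cannot be created.");
        }
    }
}
```

Under these assumptions the containers alone would need 280,000 threads, about 17,856 more than the limit allows, so the NodeManager's thread creation would fail even before the job reached its peak.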

2. It may be an out-of-memory bug somewhere in Hadoop/YARN. See a similar issue: https://issues.apache.org/jira/browse/YARN-4581

4. Postscript

When Hadoop has to process a large number of small files, use org.apache.hadoop.mapreduce.lib.input.CombineTextInputFormat and set mapreduce.input.fileinputformat.split.maxsize = 5147483648.
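In a job driver this usually means calling `job.setInputFormatClass(CombineTextInputFormat.class)` and setting the split.maxsize property. The stdlib-only Java sketch below shows why that cuts the number of map tasks: many small files are packed into a few large splits. It is a deliberate simplification, not Hadoop's actual algorithm, because CombineFileInputFormat also groups data by node and rack and works on blocks. The file sizes are hypothetical (20,000 files of 1 MB each); only the maximum split size comes from this post.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/**
 * Simplified illustration of combining small files into splits.
 * NOT Hadoop's real algorithm: files are packed in order into splits whose
 * total stays within maxSplitSize; an oversized file gets a split of its own.
 */
public class SmallFilePacking {

    /** Packs file sizes (bytes) into splits and returns the total size of each split. */
    static List<Long> pack(long[] fileSizes, long maxSplitSize) {
        List<Long> splits = new ArrayList<>();
        long current = 0;
        for (long size : fileSizes) {
            // Close the current split if this file would push it past the limit.
            if (current > 0 && current + size > maxSplitSize) {
                splits.add(current);
                current = 0;
            }
            current += size;
        }
        if (current > 0) {
            splits.add(current);
        }
        return splits;
    }

    public static void main(String[] args) {
        long[] files = new long[20_000];       // hypothetical: 20,000 small files
        Arrays.fill(files, 1L << 20);          // hypothetical: 1 MB each
        long maxSplitSize = 5_147_483_648L;    // split.maxsize value used in this post

        System.out.println("one map per file: " + files.length);
        System.out.println("combined splits:  " + pack(files, maxSplitSize).size());
    }
}
```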