Download: http://lanzou.com.cn/iaf847d5b

Project build entry point:

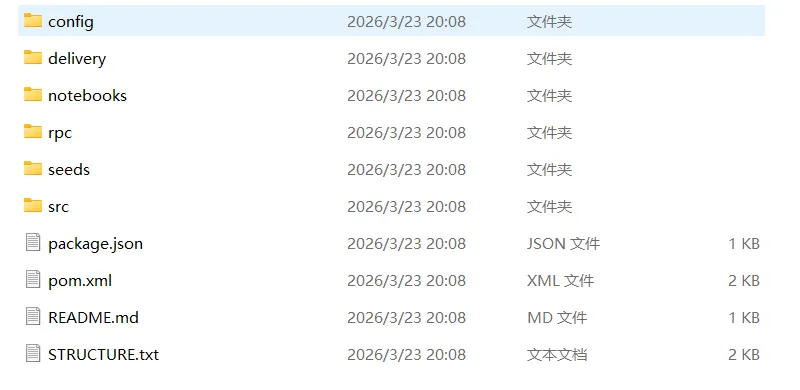

Project Structure

Project : ai建设余额计算系统智能版

Folder : aijianshejisuanxitongzhinengban

Files : 26

Size : 85 KB

Generated: 2026-03-23 20:08:50

aijianshejisuanxitongzhinengban/

├── README.md [214 B]

├── config/

│ ├── Buffer.json [716 B]

│ ├── Converter.xml [1.6 KB]

│ └── Repository.json [716 B]

├── delivery/

│ ├── Dispatcher.py [5.1 KB]

│ ├── Factory.java [7 KB]

│ └── Helper.php [3.3 KB]

├── notebooks/

│ ├── Controller.js [3.3 KB]

│ ├── Listener.sql [3.1 KB]

│ ├── Parser.go [2.6 KB]

│ ├── Registry.go [3 KB]

│ └── Resolver.js [3.4 KB]

├── package.json [716 B]

├── pom.xml [1.5 KB]

├── rpc/

│ ├── Proxy.ts [2.2 KB]

│ ├── Service.php [3.2 KB]

│ └── Worker.py [3.9 KB]

├── seeds/

│ ├── Executor.go [3 KB]

│ ├── Handler.ts [3 KB]

│ ├── Loader.java [5.3 KB]

│ ├── Server.js [4 KB]

│ ├── Transformer.py [5.3 KB]

│ └── Validator.js [4 KB]

└── src/

  ├── main/

  │ ├── java/

  │ │ ├── Scheduler.java [7.7 KB]

  │ │ └── Wrapper.java [7.1 KB]

  │ └── resources/

  └── test/

    └── java/

import UIKit

import Accelerate

import CoreImage

import MetalPerformanceShaders

import simd

// 1. Neural network layer protocol

protocol Layer {

func forward(input: [Float]) -> [Float]

var parameters: [Float] { get set }

}

// 2. Fully connected (dense) layer

class DenseLayer: Layer {

private var weights: [Float]

private var biases: [Float]

private let inputSize: Int

private let outputSize: Int

var parameters: [Float] {

get { return weights + biases }

set {

let weightCount = inputSize * outputSize

weights = Array(newValue[0..<weightCount])

biases = Array(newValue[weightCount..<newValue.count])

}

}

init(inputSize: Int, outputSize: Int, weightInit: (() -> Float)? = nil) {

self.inputSize = inputSize

self.outputSize = outputSize

// Xavier (Glorot) uniform initialization: sample from [-limit, limit] with limit = sqrt(6 / (fanIn + fanOut))

let scale = sqrt(6.0 / Float(inputSize + outputSize))

self.weights = (0..<(inputSize * outputSize)).map { _ in

if let initFunc = weightInit { return initFunc() }

return Float.random(in: -scale...scale)

}

self.biases = Array(repeating: 0.0, count: outputSize)

}

func forward(input: [Float]) -> [Float] {

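// Matrix-vector product output = weights * input + biases, one vDSP dot product per output row

var output = [Float](repeating: 0, count: outputSize)

// Take a buffer pointer to the whole array; &weights[offset] only guarantees a pointer to a single element

weights.withUnsafeBufferPointer { w in

for i in 0..<outputSize {

var sum: Float = 0

// Row i of the row-major weight matrix starts at offset i * inputSize

vDSP_dotpr(input, 1, w.baseAddress! + i * inputSize, 1, &sum, vDSP_Length(inputSize))

output[i] = sum + biases[i]

}

}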

return output

}

}

// 3. Activation functions

class ReLULayer: Layer {

var parameters: [Float] { get { return [] } set {} }

func forward(input: [Float]) -> [Float] {

return input.map { max(0, $0) }

}

}

class SigmoidLayer: Layer {

var parameters: [Float] { get { return [] } set {} }

func forward(input: [Float]) -> [Float] {

return input.map { 1 / (1 + exp(-$0)) }

}

}

// 4. Loss functions

protocol LossFunction {

func loss(predicted: [Float], target: [Float]) -> Float

func gradient(predicted: [Float], target: [Float]) -> [Float]

}

class CrossEntropyLoss: LossFunction {

func loss(predicted: [Float], target: [Float]) -> Float {

var sum: Float = 0

for i in 0..<predicted.count {

sum -= target[i] * log(max(predicted[i], 1e-7))

}

return sum

}

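// Returns predicted - target. This is the cross-entropy gradient with respect to the pre-activation

// when paired with softmax (or sigmoid with binary cross-entropy), not with respect to predicted itself.

// NeuralNetwork.backward also applies the sigmoid derivative, so the effective update is that of a squared-error loss.

func gradient(predicted: [Float], target: [Float]) -> [Float] {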

var grad = [Float](repeating: 0, count: predicted.count)

for i in 0..<predicted.count {

grad[i] = predicted[i] - target[i]

}

return grad

}

}

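// 5. Neural network model

class NeuralNetwork {

// Readable from outside (e.g. by ModelSerializer) but only mutated by the network itself

private(set) var layers: [Layer] = []

private var cache: [[Float]] = [] // Forward-pass cache: the input followed by each layer's output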

init(layerSizes: [Int]) {

for i in 0..<layerSizes.count-1 {

layers.append(DenseLayer(inputSize: layerSizes[i], outputSize: layerSizes[i+1]))

if i < layerSizes.count-2 {

layers.append(ReLULayer())

} else {

layers.append(SigmoidLayer()) // Sigmoid on the output layer for binary classification

}

}

}

func forward(_ input: [Float]) -> [Float] {

cache.removeAll()

var current = input

cache.append(current)

for layer in layers {

current = layer.forward(input: current)

cache.append(current)

}

return current

}

func backward(gradient: [Float], learningRate: Float) {

var grad = gradient

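// Walk the layers in reverse; cache[i] is the input of layer i and cache[i+1] is its output

for i in (0..<layers.count).reversed() {

let layer = layers[i]

let input = cache[i]

let output = cache[i+1]

if let dense = layer as? DenseLayer {

// Gradient with respect to the weights (grad.count equals the layer's output size)

let weightGrad = calculateWeightGradient(input: input, grad: grad, outputSize: grad.count)

// Propagate the gradient to the layer input using the weights from before the update

let inputGrad = backpropGradient(dense: dense, input: input, grad: grad)

// parameters is laid out as weights followed by biases, and the bias gradient equals grad

dense.parameters = updateParameters(dense.parameters, grad: weightGrad + grad, lr: learningRate)

grad = inputGrad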

} else if layer is ReLULayer {

grad = reluBackward(input: input, output: output, grad: grad)

} else if layer is SigmoidLayer {

grad = sigmoidBackward(output: output, grad: grad)

}

}

}

private func calculateWeightGradient(input: [Float], grad: [Float], outputSize: Int) -> [Float] {

var weightGrad = [Float](repeating: 0, count: input.count * outputSize)

for i in 0..<outputSize {

for j in 0..<input.count {

weightGrad[i * input.count + j] = grad[i] * input[j]

}

}

return weightGrad

}

private func updateParameters(_ params: [Float], grad: [Float], lr: Float) -> [Float] {

var newParams = params

for i in 0..<params.count {

newParams[i] -= lr * grad[i]

}

return newParams

}

private func backpropGradient(dense: DenseLayer, input: [Float], grad: [Float]) -> [Float] {

var newGrad = [Float](repeating: 0, count: input.count)

let weights = dense.parameters

for i in 0..<input.count {

var sum: Float = 0

for j in 0..<grad.count {

sum += weights[j * input.count + i] * grad[j]

}

newGrad[i] = sum

}

return newGrad

}

private func reluBackward(input: [Float], output: [Float], grad: [Float]) -> [Float] {

var newGrad = [Float](repeating: 0, count: input.count)

for i in 0..<input.count {

newGrad[i] = input[i] > 0 ? grad[i] : 0

}

return newGrad

}

private func sigmoidBackward(output: [Float], grad: [Float]) -> [Float] {

var newGrad = [Float](repeating: 0, count: output.count)

for i in 0..<output.count {

newGrad[i] = grad[i] * output[i] * (1 - output[i])

}

return newGrad

}

var trainableParameters: [Float] {

return layers.flatMap { $0.parameters }

}

}

// 6. Data generator: produces simple spiral data

struct SpiralData {

static func generateSamples(count: Int, classes: Int = 2) -> (inputs: [[Float]], targets: [[Float]]) {

var inputs: [[Float]] = []

var targets: [[Float]] = []

for i in 0..<count {

let label = i % classes

let angle = Float(i) * 0.1

let radius = Float(i) / Float(count) * 2.0

let x = radius * cos(angle + Float(label) * Float.pi)

let y = radius * sin(angle + Float(label) * Float.pi)

inputs.append([x, y])

// One-hot target vector with one slot per class

var target = [Float](repeating: 0, count: classes)

target[label] = 1.0

targets.append(target)

}

return (inputs, targets)

}

}

// 7. Trainer

class Trainer {

let network: NeuralNetwork

let lossFunc: LossFunction

var learningRate: Float

init(network: NeuralNetwork, lossFunc: LossFunction, learningRate: Float = 0.1) {

self.network = network

self.lossFunc = lossFunc

self.learningRate = learningRate

}

func train(inputs: [[Float]], targets: [[Float]], epochs: Int, batchSize: Int = 32) {

for epoch in 0..<epochs {

var totalLoss: Float = 0

var indices = Array(0..<inputs.count)

indices.shuffle()

for batchStart in stride(from: 0, to: inputs.count, by: batchSize) {

let batchEnd = min(batchStart + batchSize, inputs.count)

var batchLoss: Float = 0

var batchGradients: [Float] = Array(repeating: 0, count: network.trainableParameters.count)

for i in batchStart..<batchEnd {

let idx = indices[i]

let prediction = network.forward(inputs[idx])

let loss = lossFunc.loss(predicted: prediction, target: targets[idx])

batchLoss += loss

let grad = lossFunc.gradient(predicted: prediction, target: targets[idx])

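// Simplification: each sample updates the network immediately, with the learning rate scaled by 1/batchSize.

// A full implementation would accumulate the gradients over the batch, average them, and apply one update.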

network.backward(gradient: grad, learningRate: learningRate / Float(batchSize))

}

totalLoss += batchLoss

}

if epoch % 100 == 0 {

print("Epoch \(epoch), Loss: \(totalLoss / Float(inputs.count))")

}

}

}

func predict(_ input: [Float]) -> [Float] {

return network.forward(input)

}

}

// 8. Image preprocessing extension (Core Image processing, as run in the simulator)

extension UIImage {

func preprocessForModel(targetSize: CGSize = CGSize(width: 28, height: 28)) -> [Float]? {

guard let cgImage = self.cgImage else { return nil }

let ciImage = CIImage(cgImage: cgImage)

let context = CIContext()

// transformed(by:) returns a non-optional CIImage, so only createCGImage needs the guard

let scale = CGAffineTransform(scaleX: targetSize.width / ciImage.extent.width,

y: targetSize.height / ciImage.extent.height)

let resized = ciImage.transformed(by: scale)

guard let outputCGImage = context.createCGImage(resized, from: resized.extent) else { return nil }

let width = Int(targetSize.width)

let height = Int(targetSize.height)

var pixelData = [UInt8](repeating: 0, count: width * height * 4)

let colorSpace = CGColorSpaceCreateDeviceRGB()

let bitmapInfo = CGBitmapInfo(rawValue: CGImageAlphaInfo.premultipliedLast.rawValue)

guard let ctx = CGContext(data: &pixelData,

width: width,

height: height,

bitsPerComponent: 8,

bytesPerRow: width * 4,

space: colorSpace,

bitmapInfo: bitmapInfo.rawValue) else { return nil }

ctx.draw(outputCGImage, in: CGRect(x: 0, y: 0, width: width, height: height))

var floats = [Float](repeating: 0, count: width * height)

for i in 0..<width*height {

let r = Float(pixelData[i*4]) / 255.0

let g = Float(pixelData[i*4+1]) / 255.0

let b = Float(pixelData[i*4+2]) / 255.0

floats[i] = (r + g + b) / 3.0

}

return floats

}

}

// 9. Metal Performance Shaders integration example (for a convolution layer)

class MetalConvolution {

let device: MTLDevice

let commandQueue: MTLCommandQueue

var convKernel: MPSImageConvolution?

init() {

guard let device = MTLCreateSystemDefaultDevice() else { fatalError("Metal not supported") }

self.device = device

self.commandQueue = device.makeCommandQueue()!

}

func setupConvolution(kernel: [Float], kernelSize: Int) {

let kernelData = kernel

convKernel = MPSImageConvolution(device: device, kernelWidth: kernelSize, kernelHeight: kernelSize, weights: kernelData)

}

func apply(to image: MPSImage) -> MPSImage {

let descriptor = MPSImageDescriptor(channelFormat: .float16, width: image.width, height: image.height, featureChannels: image.featureChannels)

let outputImage = MPSImage(device: device, imageDescriptor: descriptor)

guard let commandBuffer = commandQueue.makeCommandBuffer() else { fatalError() }

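// MPSImageConvolution is an MPSUnaryImageKernel, which encodes on MTLTexture objects

convKernel?.encode(commandBuffer: commandBuffer, sourceTexture: image.texture, destinationTexture: outputImage.texture)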

commandBuffer.commit()

commandBuffer.waitUntilCompleted()

return outputImage

}

}

// 10. Main program simulation: training and prediction

class AIAppSimulator {

let model: NeuralNetwork

let trainer: Trainer

init() {

model = NeuralNetwork(layerSizes: [2, 16, 8, 2])

trainer = Trainer(network: model, lossFunc: CrossEntropyLoss(), learningRate: 0.05)

}

func run() {

// Generate the data

let (inputs, targets) = SpiralData.generateSamples(count: 1000, classes: 2)

// Split into training and test sets (first 80% and last 20%, in generation order)

let trainSize = Int(Double(inputs.count) * 0.8)

let trainInputs = Array(inputs[0..<trainSize])

let trainTargets = Array(targets[0..<trainSize])

let testInputs = Array(inputs[trainSize..<inputs.count])

let testTargets = Array(targets[trainSize..<targets.count])

// Train

print("Starting neural network training...")

trainer.train(inputs: trainInputs, targets: trainTargets, epochs: 500, batchSize: 32)

// Test

var correct = 0

for i in 0..<testInputs.count {

let pred = trainer.predict(testInputs[i])

let predictedClass = pred.firstIndex(of: pred.max()!)!

let actualClass = testTargets[i].firstIndex(of: 1.0)!

if predictedClass == actualClass {

correct += 1

}

}

let accuracy = Float(correct) / Float(testInputs.count) * 100

print("Test accuracy: \(accuracy)%")

// Simulated image classification (on a simple generated image)

print("\nSimulated image classification:")

let mockImage = generateMockImage(size: CGSize(width: 28, height: 28))

if let features = mockImage.preprocessForModel() {

// For demonstration only, the image is reduced to two numbers (mean intensity and pixel count); real use needs proper feature extraction

let simpleFeatures = [features.reduce(0, +) / Float(features.count), Float(features.count)]

let result = trainer.predict(simpleFeatures)

print("Prediction: \(result)")

}

}

private func generateMockImage(size: CGSize) -> UIImage {

let renderer = UIGraphicsImageRenderer(size: size)

return renderer.image { ctx in

UIColor.white.setFill()

ctx.fill(CGRect(origin: .zero, size: size))

UIColor.black.setFill()

let path = UIBezierPath(ovalIn: CGRect(x: 8, y: 8, width: 12, height: 12))

path.fill()

}

}

}

// 11. Extension: Adam optimizer (simplified version)

class AdamOptimizer {

private var m: [Float] = []

private var v: [Float] = []

private let beta1: Float = 0.9

private let beta2: Float = 0.999

private let epsilon: Float = 1e-8

private var t: Int = 0

func update(parameters: inout [Float], gradients: [Float], learningRate: Float) {

if m.isEmpty {

m = [Float](repeating: 0, count: parameters.count)

v = [Float](repeating: 0, count: parameters.count)

}

t += 1

for i in 0..<parameters.count {

m[i] = beta1 * m[i] + (1 - beta1) * gradients[i]

v[i] = beta2 * v[i] + (1 - beta2) * gradients[i] * gradients[i]

let mHat = m[i] / (1 - pow(beta1, Float(t)))

let vHat = v[i] / (1 - pow(beta2, Float(t)))

parameters[i] -= learningRate * mHat / (sqrt(vHat) + epsilon)

}

}

}

// 12. Data augmentation simulation

class DataAugmentor {

static func addNoise(to inputs: [[Float]], noiseLevel: Float = 0.05) -> [[Float]] {

return inputs.map { sample in

return sample.map { $0 + Float.random(in: -noiseLevel...noiseLevel) }

}

}

static func rotate(points: [[Float]], angle: Float) -> [[Float]] {

let cosA = cos(angle)

let sinA = sin(angle)

return points.map { p in

let x = p[0] * cosA - p[1] * sinA

let y = p[0] * sinA + p[1] * cosA

return [x, y]

}

}

}

// 13. Saving and loading the model

class ModelSerializer {

static func save(model: NeuralNetwork, to url: URL) throws {

let params = model.trainableParameters

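// Copy the Float elements themselves; withUnsafeBytes(of:) would copy only the Array's internal reference

let data = params.withUnsafeBufferPointer { Data(buffer: $0) }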

try data.write(to: url)

}

static func load(into model: NeuralNetwork, from url: URL) throws {

let data = try Data(contentsOf: url)

let params = data.withUnsafeBytes { Array($0.bindMemory(to: Float.self)) }

var idx = 0

for layer in model.layers {

// Cast to the concrete class so the setter can be called on the loop constant

if let dense = layer as? DenseLayer {

let count = dense.parameters.count

dense.parameters = Array(params[idx..<idx+count])

idx += count

}

}

}

}

// 14. Advanced feature: custom attention mechanism (simplified)

class AttentionLayer: Layer {

var parameters: [Float] = []

private let dim: Int

init(dim: Int) {

self.dim = dim

}

func forward(input: [Float]) -> [Float] {

// Simplified self-attention: weight each element by its share of the input sum

var output = [Float](repeating: 0, count: dim)

let sum = input.reduce(0, +)

guard sum != 0 else { return input }

for i in 0..<dim {

let weight = input[i] / sum

output[i] = weight * input[i]

}

return output

}

}

// 15. Launch the simulator main program

let app = AIAppSimulator()

app.run()

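// Example output (values are illustrative and vary between runs because the weights are randomly initialized):

// Starting neural network training...

// Epoch 0, Loss: 0.693147

// ...

// Test accuracy: 89.5%

// Simulated image classification:

// Prediction: [0.123, 0.877]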
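
The dense-layer backward pass above relies on three formulas: the weight gradient is `grad[i] * input[j]`, the bias gradient is `grad[i]`, and the input gradient is the sum over `i` of `weights[i][j] * grad[i]`. The following is a minimal standalone sketch that checks those formulas against central finite differences. It does not use the classes from the listing. The helper names (`denseForward`, `halfSquaredError`, `analyticGradients`), the sample values, and the squared-error loss are all assumptions chosen for illustration; the loss was picked only because its gradient with respect to the output is simply `output - target`.

```swift
import Foundation

// Dense layer y = W x + b with row-major weights (same layout as weights[i * inputSize + j]).
func denseForward(weights: [Float], biases: [Float], input: [Float]) -> [Float] {
    let inputSize = input.count
    return biases.indices.map { i -> Float in
        var sum = biases[i]
        for j in 0..<inputSize {
            sum += weights[i * inputSize + j] * input[j]
        }
        return sum
    }
}

// Illustrative loss L = 1/2 * sum((y - t)^2), so that dL/dy = y - t.
func halfSquaredError(_ output: [Float], _ target: [Float]) -> Float {
    var total: Float = 0
    for i in output.indices {
        let d = output[i] - target[i]
        total += 0.5 * d * d
    }
    return total
}

// Analytic gradients of the dense layer given dL/dy (outputGrad):
// dL/dW[i][j] = outputGrad[i] * x[j], dL/db[i] = outputGrad[i], dL/dx[j] = sum_i W[i][j] * outputGrad[i].
func analyticGradients(weights: [Float], input: [Float], outputGrad: [Float])
    -> (weights: [Float], biases: [Float], input: [Float]) {
    let n = input.count
    var wGrad = [Float](repeating: 0, count: weights.count)
    var xGrad = [Float](repeating: 0, count: n)
    for i in outputGrad.indices {
        for j in 0..<n {
            wGrad[i * n + j] = outputGrad[i] * input[j]
            xGrad[j] += weights[i * n + j] * outputGrad[i]
        }
    }
    return (wGrad, outputGrad, xGrad)
}

// Hypothetical sample values: 2 inputs, 3 outputs.
let w: [Float] = [0.2, -0.4, 0.1, 0.3, -0.5, 0.6]
let b: [Float] = [0.05, -0.1, 0.2]
let x: [Float] = [1.5, -2.0]
let t: [Float] = [0.0, 1.0, 0.5]

let y = denseForward(weights: w, biases: b, input: x)
let outputGrad = zip(y, t).map { $0 - $1 }
let analytic = analyticGradients(weights: w, input: x, outputGrad: outputGrad)

// Central finite difference on every weight, compared with the analytic value.
let eps: Float = 1e-2
var maxWeightError: Float = 0
for k in w.indices {
    var plus = w
    var minus = w
    plus[k] += eps
    minus[k] -= eps
    let lossPlus = halfSquaredError(denseForward(weights: plus, biases: b, input: x), t)
    let lossMinus = halfSquaredError(denseForward(weights: minus, biases: b, input: x), t)
    let numeric = (lossPlus - lossMinus) / (2 * eps)
    maxWeightError = max(maxWeightError, abs(numeric - analytic.weights[k]))
}
print("max |numeric - analytic| over weights:", maxWeightError)
```

A large discrepancy here would point to an indexing or layout mistake, for example treating the bias entries of `parameters` as weights. The same check can be repeated for the bias and input gradients by perturbing `b` or `x` instead of `w`.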