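Why does a service deployed on ModelScope via "Deploy for free to the ModelScope Inference API" (免费部署到魔搭推理API) return an error when the API is called?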

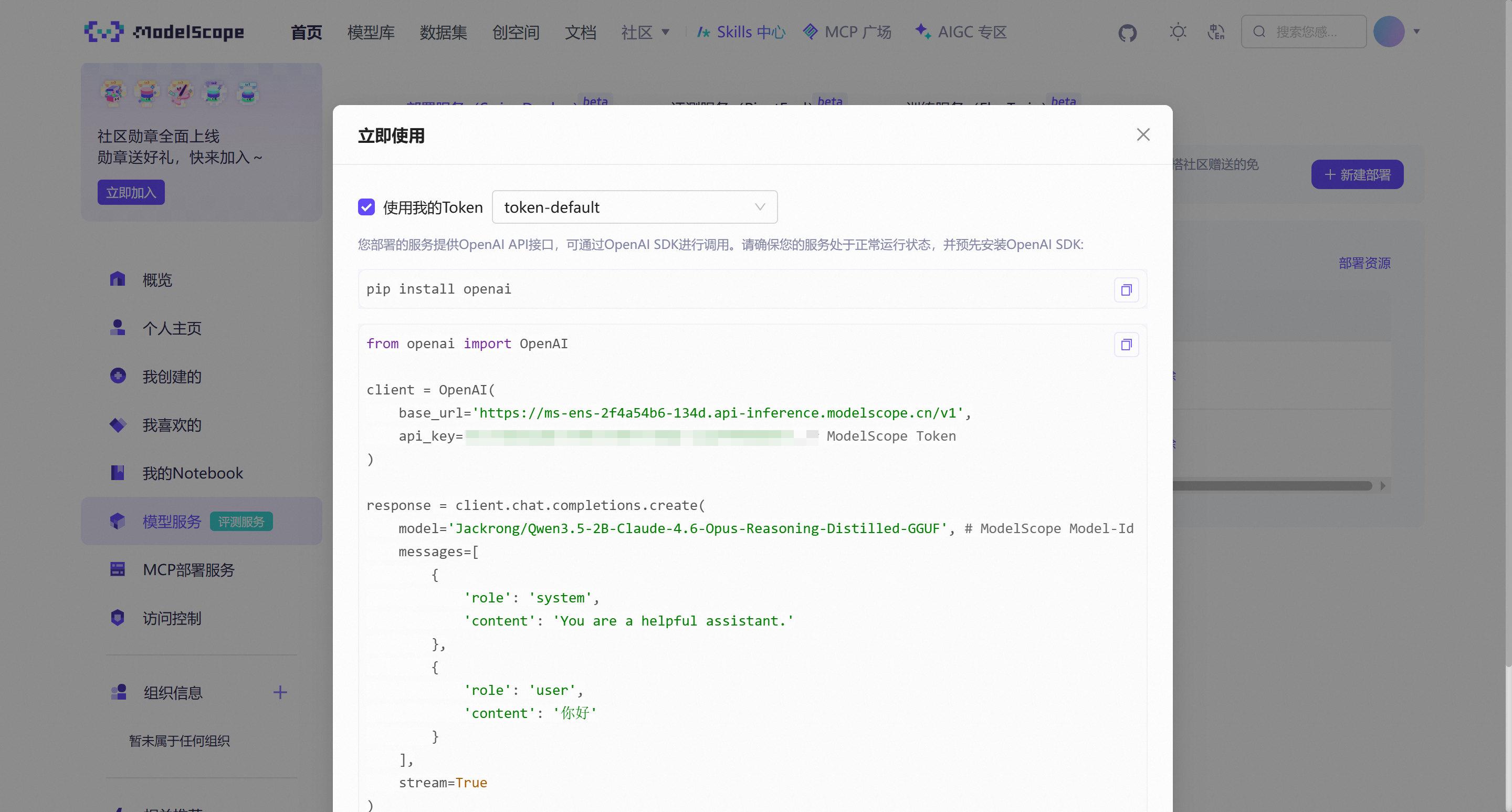

ModelScope configuration:

Test code:

Error raised by the test code:

Traceback (most recent call last):
  File "D:\data\python_project\python_srcipt\llm\cici_op.py", line 8, in <module>
    response = client.chat.completions.create(
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\hz21056736\AppData\Local\Programs\Python\Python312\Lib\site-packages\openai\_utils\_utils.py", line 286, in wrapper
    return func(*args, **kwargs)
           ^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\hz21056736\AppData\Local\Programs\Python\Python312\Lib\site-packages\openai\resources\chat\completions\completions.py", line 1204, in create
    return self._post(
           ^^^^^^^^^^^
  File "C:\Users\hz21056736\AppData\Local\Programs\Python\Python312\Lib\site-packages\openai\_base_client.py", line 1297, in post
    return cast(ResponseT, self.request(cast_to, opts, stream=stream, stream_cls=stream_cls))
                           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\hz21056736\AppData\Local\Programs\Python\Python312\Lib\site-packages\openai\_base_client.py", line 1070, in request
    raise self._make_status_error_from_response(err.response) from None
openai.InternalServerError: Error code: 500 - {'error': {'code': None, 'message': 'unable to load model: /mnt/cache/jackrong-qwen3.5-2b-claude-4.6-opus-reasoning-distilled-gguf/blobs/sha256-9e74321a0981f7333ffa067e1ad80b03a8b2bc6954acf4172fc63bd3ba23f178', 'param': None, 'type': 'api_error'}, 'request_id': '13862ff1-65c0-4f2d-b956-c9609c152372'}
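
For anyone reading the error: an HTTP 500 is a server-side status, and the message says the deployed service was unable to load the model file, so the request itself appears to have reached the service. The sketch below only pulls the relevant fields out of the error body quoted above; `summarize_error` is a hypothetical helper and not part of the `openai` package or of ModelScope, and it does not explain why the model failed to load.

```python
# Error body copied from the traceback above.
ERROR_BODY = {
    "error": {
        "code": None,
        "message": (
            "unable to load model: /mnt/cache/"
            "jackrong-qwen3.5-2b-claude-4.6-opus-reasoning-distilled-gguf/blobs/"
            "sha256-9e74321a0981f7333ffa067e1ad80b03a8b2bc6954acf4172fc63bd3ba23f178"
        ),
        "param": None,
        "type": "api_error",
    },
    "request_id": "13862ff1-65c0-4f2d-b956-c9609c152372",
}


def summarize_error(status_code: int, body: dict) -> dict:
    """Hypothetical helper: extract the fields worth quoting in a bug report."""
    error = body.get("error") or {}
    message = error.get("message") or ""
    return {
        # 5xx status codes indicate a failure on the server, not in the request.
        "server_side": 500 <= status_code <= 599,
        # Assumption: this prefix is how the service reports a model load failure,
        # based only on the single message shown in this post.
        "model_load_failure": message.startswith("unable to load model"),
        "message": message,
        "request_id": body.get("request_id"),
    }


if __name__ == "__main__":
    for key, value in summarize_error(500, ERROR_BODY).items():
        print(f"{key}: {value}")
```

The `request_id` is the value to include when reporting the problem, since it identifies this specific failed request on the server.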
