"torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate 28.57 GiB (GPU 2; 39.59 GiB total capacity; 33.25 GiB already allocated; 5.06 GiB free; 33.60 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF我用这种方式os.environ['CUDA_VISIBLE_DEVICES']='0,1,2,3,4,5,6,7' 指定的ModelScope gpu,但是只用到了一张2卡,怎么修改?

"

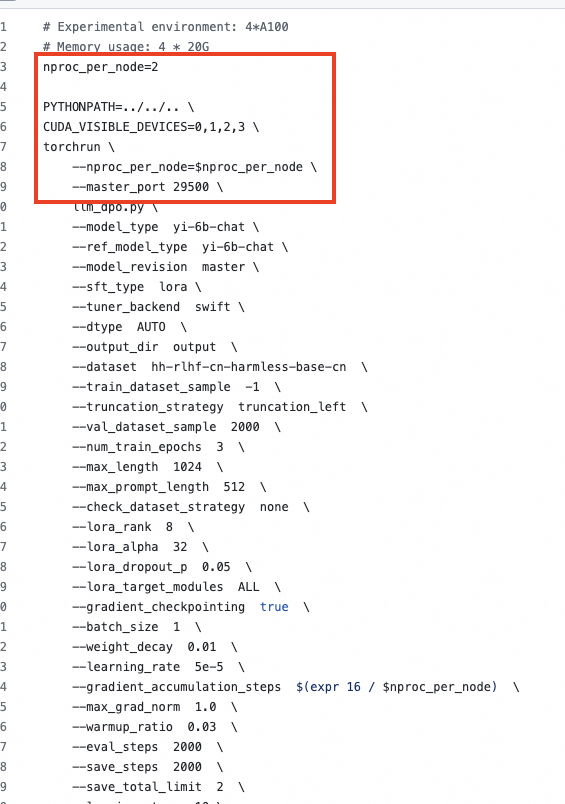

"参考下mp+ddp,https://github.com/modelscope/swift/blob/main/examples/pytorch/llm/scripts/dpo/lora_ddp_mp/dpo.sh

此回答整理自钉群“魔搭ModelScope开发者联盟群 ①”"
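For context, CUDA_VISIBLE_DEVICES only controls which cards a process can see; it does not spread a model across them. The error above shows a 28.57 GiB allocation requested on GPU 2 with only 5.06 GiB free, so the work has to be split across several cards. The following is a minimal sketch of the mp+ddp idea, not the layout swift actually uses: `split_gpus_per_rank` is a hypothetical helper that divides the visible cards evenly among DDP processes, with each process then sharding the model across its own group. It also assumes the environment variable is set before anything initializes CUDA, since changing it afterwards has no effect.

```python
import os

# Set this before anything initializes CUDA (safest: before importing torch).
os.environ["CUDA_VISIBLE_DEVICES"] = "0,1,2,3,4,5,6,7"


def split_gpus_per_rank(visible, nproc_per_node):
    """Hypothetical helper: divide visible GPU ids evenly among DDP processes.

    Each returned group is the set of cards one process would shard the
    model across (the "mp" part); the number of groups is the "ddp" part.
    """
    gpus = [g.strip() for g in visible.split(",") if g.strip()]
    if nproc_per_node <= 0 or len(gpus) % nproc_per_node != 0:
        raise ValueError("GPU count must be a positive multiple of nproc_per_node")
    per_rank = len(gpus) // nproc_per_node
    return [gpus[i * per_rank:(i + 1) * per_rank] for i in range(nproc_per_node)]


# Illustration: 8 visible cards, 2 DDP processes, 4 cards per model replica.
groups = split_gpus_per_rank(os.environ["CUDA_VISIBLE_DEVICES"], 2)
for rank, group in enumerate(groups):
    print(f"rank {rank}: model sharded across GPUs {group}")
```

With fewer processes, each model replica gets more cards and therefore more memory headroom, at the cost of less data parallelism.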
