Run the next test file, which creates a hello.txt file under the test45 folder and writes data to it.

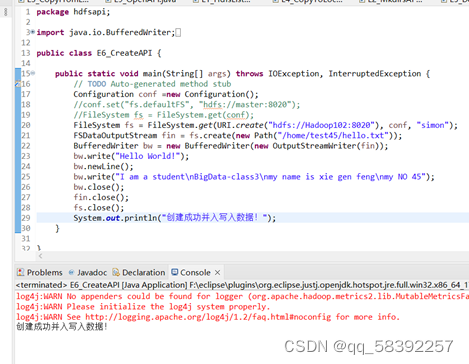

    package hdfsapi;

    import java.io.BufferedWriter;
    import java.io.IOException;
    import java.io.OutputStreamWriter;
    import java.net.URI;
    import java.nio.charset.StandardCharsets;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class E6_CreateAPI {

        public static void main(String[] args) throws IOException, InterruptedException {
            Configuration conf = new Configuration();
            // Alternative: set fs.defaultFS and connect as the current OS user.
            // conf.set("fs.defaultFS", "hdfs://master:8020");
            // FileSystem fs = FileSystem.get(conf);

            // Connect to the NameNode as user "simon"; try-with-resources closes
            // the writer, the stream, and the file system in reverse order.
            try (FileSystem fs = FileSystem.get(URI.create("hdfs://Hadoop102:8020"), conf, "simon");
                 // create() overwrites the file if it already exists.
                 FSDataOutputStream out = fs.create(new Path("/home/test45/hello.txt"));
                 BufferedWriter bw = new BufferedWriter(
                         new OutputStreamWriter(out, StandardCharsets.UTF_8))) {
                bw.write("Hello World!");
                bw.newLine();
                bw.write("I am a student\nBigData-class3\nmy name is xie gen feng\nmy NO 45");
            }
            System.out.println("File created and data written successfully!");
        }
    }
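Since `FSDataOutputStream` is an ordinary `java.io.OutputStream`, the writer chain used in `E6_CreateAPI` can be tried out locally without a cluster. The following is a minimal sketch under that assumption: the class `WriterStackSketch` and its methods are hypothetical names introduced for illustration, and a `ByteArrayOutputStream` stands in for the HDFS stream. It shows which characters end up in the file.

```java
import java.io.BufferedWriter;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.io.OutputStreamWriter;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper class; not part of the original project.
class WriterStackSketch {

    // Writes the same text as E6_CreateAPI to any OutputStream.
    static void writeGreeting(OutputStream out) throws IOException {
        // Closing the BufferedWriter flushes it and closes the wrapped stream too.
        try (BufferedWriter bw = new BufferedWriter(
                new OutputStreamWriter(out, StandardCharsets.UTF_8))) {
            bw.write("Hello World!");
            bw.newLine(); // platform line separator of the client machine
            bw.write("I am a student\nBigData-class3\nmy name is xie gen feng\nmy NO 45");
        }
    }

    // Captures the written bytes in memory and returns them as a string.
    static String capture() {
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        try {
            writeGreeting(sink);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return new String(sink.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        System.out.print(capture());
    }
}
```

Note that `newLine()` writes the line separator of the machine running the client, so a program launched from a Windows workstation would put `\r\n` after the first line, while the explicit `\n` characters are written unchanged. The text also ends without a trailing newline.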

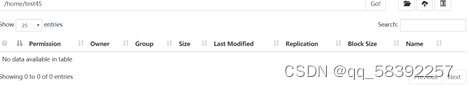

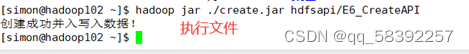

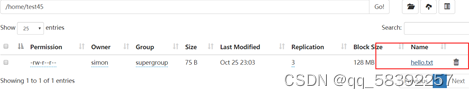

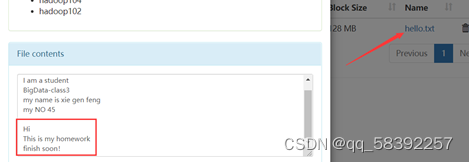

Execution succeeded. Check the web UI:

The data was written successfully.

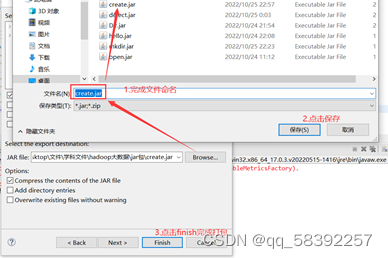

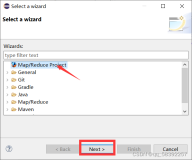

Package the file as a jar

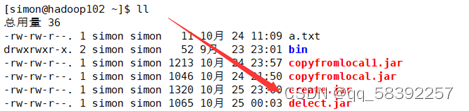

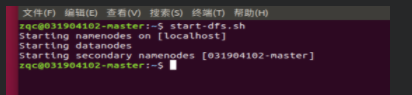

Upload the packaged jar to Linux

Delete the file in the web UI so that running the jar on Linux can be verified

After execution

Web UI

Package the other Java test files in the same way

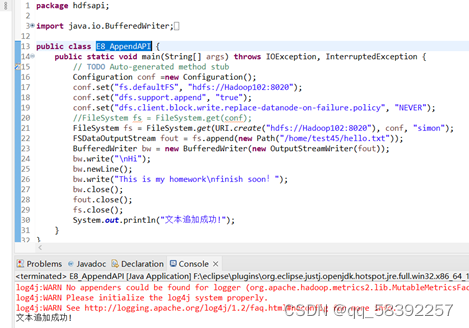

Next, verify text append

    package hdfsapi;

    import java.io.BufferedWriter;
    import java.io.IOException;
    import java.io.OutputStreamWriter;
    import java.net.URI;
    import java.nio.charset.StandardCharsets;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class E8_AppendAPI {

        public static void main(String[] args) throws IOException, InterruptedException {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://Hadoop102:8020");
            conf.set("dfs.support.append", "true");
            // Do not look for a replacement DataNode when one in the write pipeline
            // fails; on a small cluster there may be none available.
            conf.set("dfs.client.block.write.replace-datanode-on-failure.policy", "NEVER");
            // FileSystem fs = FileSystem.get(conf);

            // Connect as user "simon"; try-with-resources closes the writer,
            // the stream, and the file system in reverse order.
            try (FileSystem fs = FileSystem.get(URI.create("hdfs://Hadoop102:8020"), conf, "simon");
                 // append() requires that the file already exists.
                 FSDataOutputStream out = fs.append(new Path("/home/test45/hello.txt"));
                 BufferedWriter bw = new BufferedWriter(
                         new OutputStreamWriter(out, StandardCharsets.UTF_8))) {
                // The existing file has no trailing newline, so start with one.
                bw.write("\nHi");
                bw.newLine();
                bw.write("This is my homework\nfinish soon!");
            }
            System.out.println("Text appended successfully!");
        }
    }
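The effect of the append step can be previewed on a local file before running it against the cluster. The sketch below is only a local-filesystem analogue, not the HDFS API: it assumes `java.nio.file` with `StandardOpenOption.APPEND` as a stand-in for `fs.append(...)`, and `LocalAppendSketch` is a hypothetical name. It starts from the last line written by `E6_CreateAPI` and appends the same text as `E8_AppendAPI`.

```java
import java.io.BufferedWriter;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical local analogue of the append step; it does not touch HDFS.
class LocalAppendSketch {

    // Creates a temp file ending in "my NO 45", appends to it, returns the result.
    static String createThenAppend() {
        try {
            Path file = Files.createTempFile("hello", ".txt");
            try {
                // Existing content: no trailing newline, as in the created file.
                Files.write(file, "my NO 45".getBytes(StandardCharsets.UTF_8));

                // APPEND keeps the existing bytes and writes after them.
                try (BufferedWriter bw = Files.newBufferedWriter(
                        file, StandardCharsets.UTF_8, StandardOpenOption.APPEND)) {
                    bw.write("\nHi"); // leading newline separates old and new text
                    bw.newLine();
                    bw.write("This is my homework\nfinish soon!");
                }
                return new String(Files.readAllBytes(file), StandardCharsets.UTF_8);
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(createThenAppend());
    }
}
```

Without the leading `\n` in the first appended string, `Hi` would be joined onto the end of the existing last line, because the created file does not end with a newline.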

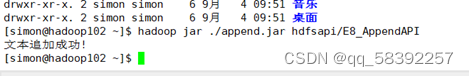

Run the jar on Linux

Verify in the web UI that the data was written successfully.

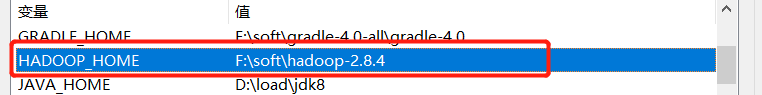

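This exercise covered the principles of HDFS Java API programming, the HDFS commands, and remote debugging of Hadoop programs from Eclipse. The basic HDFS API calls were used to write Java programs in Eclipse that operate on HDFS, and the Java files were then packaged as jars, uploaded to Linux, and run there with the hadoop command.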