Direct use

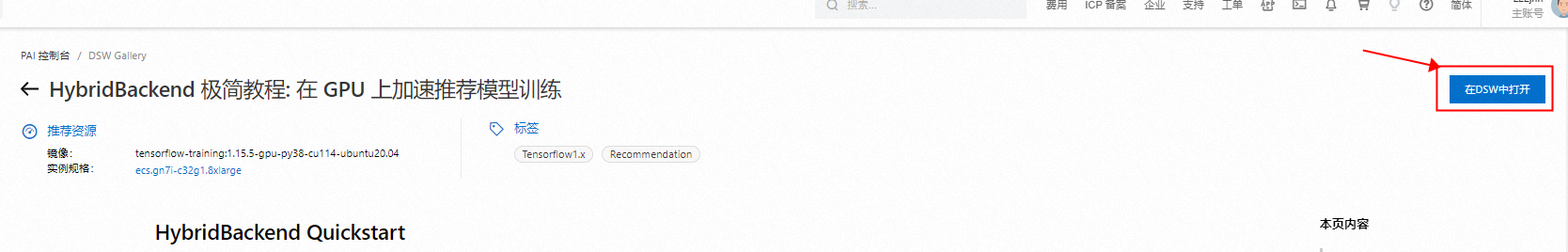

Open "HybridBackend Quickstart: Accelerating Recommendation Model Training on GPUs" and click "Open in DSW" in the upper-right corner.

HybridBackend Quickstart

In this tutorial, we use HybridBackend to speed up training of a sample ranking model, based on stacked DCNv2, on the Taobao ad click dataset.

Why HybridBackend

- Training industrial recommendation models can benefit greatly from GPUs

  - The embedding layer is becoming wider, consuming up to thousands of feature fields, which requires larger memory bandwidth;

  - The feature interaction layer is becoming deeper, applying multiple DNN submodules to different subsets of features, which requires higher computing capability;

  - GPUs provide much higher computing capability, larger memory bandwidth, and faster data movement;

- Canonical training frameworks do not let industrial recommendation models take full advantage of GPU resources

  - Industrial recommendation models contain up to a thousand input feature fields, introducing fragmentary and memory-intensive operations;

  - The many constituent feature interaction submodules introduce a large number of small compute kernels;

- A training framework for industrial recommendation models must be minimally invasive and compatible with existing workflows

  - Training is only one part of a production recommendation system, and modifying the inference pipeline takes great effort;

  - AI scientists write models in a variety of ways, especially in a big team.

HybridBackend speeds up training of industrial recommendation models on GPUs with minimal changes to existing code. In this tutorial, you will learn how to use HybridBackend to make training of industrial recommendation models much faster.

See the HybridBackend GitHub repo and the paper for more information.

Requirements

- Hardware

  - Modern GPU and interconnect (e.g. A10 / PCIe Gen4)

  - Fast data storage (e.g. ESSD)

- Software

  - Ubuntu 20.04 or above

  - Python 3.8 or above

  - CUDA 11.4

  - TensorFlow 1.15

  - TFRecord Format

  - Parquet Format
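Before installing the package, it can help to confirm that the interpreter meets the Python requirement listed above. The following is a minimal sketch using only the standard library; the helper names `python_ok` and `describe_environment` are hypothetical and not part of HybridBackend, and the check does not cover CUDA or TensorFlow versions.

```python
import platform
import sys

# Minimum Python version, taken from the requirements list above.
MIN_PYTHON = (3, 8)


def python_ok(version_info=sys.version_info, minimum=MIN_PYTHON):
  """Return True if (major, minor) of version_info is at least `minimum`."""
  return tuple(version_info[:2]) >= tuple(minimum)


def describe_environment():
  """Collect basic facts about the current interpreter and OS."""
  return {
    'python': platform.python_version(),
    'python_ok': python_ok(),
    'system': platform.system(),
  }


# Print one "key: value" line per collected fact.
for key, value in describe_environment().items():
  print('{}: {}'.format(key, value))
```

If `python_ok` is reported as `False`, switch to a Python 3.8+ kernel before running the install command.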

!pip3 install hybridbackend-tf115-cu114

Sample ranking model

In this tutorial, a sample ranking model based on stacked DCNv2 is used. See the code in ranking for more details.

    import os
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

    import tensorflow as tf
    from tensorflow.python.util import module_wrapper as deprecation
    deprecation._PER_MODULE_WARNING_LIMIT = 0
    tf.get_logger().propagate = False

    from ranking.data import DataSpec
    from ranking.model import stacked_dcn_v2
    from ranking.model import wide_and_deep_features

    # Global configuration
    train_max_steps = 100
    train_batch_size = 16000
    data_spec = DataSpec.read('ranking/taobao/data/spec.json')


    def train(iterator, embedding_weight_device, dnn_device, hooks):
      batch = iterator.get_next()
      batch.pop('ts')
      labels = tf.reshape(tf.to_float(batch.pop('label')), shape=[-1, 1])
      wide_features, deep_features = wide_and_deep_features(
        batch,
        data_spec.defaults,
        data_spec.norms,
        data_spec.logs,
        data_spec.embedding_dims,
        data_spec.embedding_sizes,
        embedding_weight_device)
      with tf.device(dnn_device):
        logits = stacked_dcn_v2(
          wide_features + deep_features,
          [1024, 1024, 512, 256, 1])
      loss = tf.reduce_mean(tf.keras.losses.binary_crossentropy(labels, logits))
      step = tf.train.get_or_create_global_step()
      opt = tf.train.AdagradOptimizer(learning_rate=0.001)
      train_op = opt.minimize(loss, global_step=step)

      hooks.append(tf.train.StepCounterHook(10))
      hooks.append(tf.train.StopAtStepHook(train_max_steps))
      config = tf.ConfigProto(allow_soft_placement=True)
      config.gpu_options.allow_growth = True
      config.gpu_options.force_gpu_compatible = True
      with tf.train.MonitoredTrainingSession(
          '', hooks=hooks, config=config) as sess:
        while not sess.should_stop():
          sess.run(train_op)

Training without HybridBackend

Without HybridBackend, training the sample ranking model underutilizes GPUs.

    # Download training data in TFRecord format
    !wget http://easyrec.oss-cn-beijing.aliyuncs.com/data/taobao/day_0.tfrecord

    with tf.Graph().as_default():
      ds = tf.data.TFRecordDataset('./day_0.tfrecord', compression_type='GZIP')
      ds = ds.batch(train_batch_size, drop_remainder=True)
      ds = ds.map(
        lambda batch: tf.io.parse_example(batch, data_spec.to_example_spec()))
      ds = ds.prefetch(2)
      iterator = tf.data.make_one_shot_iterator(ds)
      with tf.device('/gpu:0'):
        train(iterator, '/cpu:0', '/gpu:0', [])

Training with HybridBackend

With a single import line, HybridBackend automatically applies packing and interleaving to speed up the embedding layers dramatically.

    # Note: Once HybridBackend is on, you need to restart notebook to turn it off.
    import hybridbackend.tensorflow as hb

    # Exact same code except HybridBackend is on.
    with tf.Graph().as_default():
      ds = tf.data.TFRecordDataset('./day_0.tfrecord', compression_type='GZIP')
      ds = ds.batch(train_batch_size, drop_remainder=True)
      ds = ds.map(
        lambda batch: tf.io.parse_example(batch, data_spec.to_example_spec()))
      ds = ds.prefetch(2)
      iterator = tf.data.make_one_shot_iterator(ds)
      with tf.device('/gpu:0'):
        train(iterator, '/cpu:0', '/gpu:0', [])
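As a rough intuition for what packing refers to, many small, similar operations can be grouped so that fewer, larger operations are issued. The toy sketch below groups feature fields by embedding dimension and counts how many lookup operations would remain. It is not HybridBackend's actual implementation: the grouping criterion (same embedding dimension), the function names, and the field names are all assumptions made for illustration.

```python
from collections import defaultdict


def pack_by_dimension(embedding_dims):
  """Group feature fields whose embeddings share the same dimension.

  embedding_dims: dict mapping field name -> embedding dimension.
  Returns a dict mapping dimension -> sorted list of field names.
  """
  groups = defaultdict(list)
  for field, dim in embedding_dims.items():
    groups[dim].append(field)
  return {dim: sorted(fields) for dim, fields in groups.items()}


def lookup_op_counts(embedding_dims):
  """Return (ops without packing, ops with packing) in this toy model.

  Without packing, every field issues its own lookup; with packing,
  every group of same-dimension fields issues a single lookup.
  """
  packed = pack_by_dimension(embedding_dims)
  return len(embedding_dims), len(packed)


# Hypothetical field names and dimensions, for illustration only.
example_dims = {
  'user_id': 16,
  'item_id': 16,
  'category': 8,
  'brand': 8,
  'price_bucket': 8,
}
print(pack_by_dimension(example_dims))
print(lookup_op_counts(example_dims))
```

In this toy model the operation count depends on the number of distinct embedding dimensions rather than the number of fields, which is why models with hundreds or thousands of fields stand to gain the most.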

Training with HybridBackend (Optimized data pipeline)

Even greater training performance gains can be achieved with the optimized data pipeline provided by HybridBackend.

    # Download training data in Parquet format
    !wget http://easyrec.oss-cn-beijing.aliyuncs.com/data/taobao/day_0.parquet

    # Note: Once HybridBackend is on, you need to restart notebook to turn it off.
    import hybridbackend.tensorflow as hb

    with tf.Graph().as_default():
      ds = hb.data.ParquetDataset(
        './day_0.parquet',
        batch_size=train_batch_size,
        num_parallel_parser_calls=tf.data.experimental.AUTOTUNE,
        drop_remainder=True)
      ds = ds.apply(hb.data.to_sparse())
      ds = ds.prefetch(2)
      iterator = tf.data.make_one_shot_iterator(ds)
      with tf.device('/gpu:0'):
        iterator = hb.data.Iterator(iterator, 2)
        train(iterator, '/cpu:0', '/gpu:0', [hb.data.Iterator.Hook()])
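To compare the three runs, the steps-per-second rate reported by `tf.train.StepCounterHook` can be converted into samples per second and a relative speedup. The helpers below are a minimal sketch; the function names are hypothetical, and the numbers in the usage lines are placeholders rather than measurements.

```python
def samples_per_second(steps_per_second, batch_size):
  """Convert a step rate into a sample rate for a fixed batch size."""
  return steps_per_second * batch_size


def speedup(baseline_steps_per_second, optimized_steps_per_second):
  """Return how many times faster the optimized run is than the baseline."""
  if baseline_steps_per_second <= 0:
    raise ValueError('baseline rate must be positive')
  return optimized_steps_per_second / baseline_steps_per_second


# Placeholder step rates, NOT measured results; substitute the values
# logged by your own runs.
baseline_rate = 2.0
optimized_rate = 5.0
batch_size = 16000  # train_batch_size used in this tutorial

print(samples_per_second(baseline_rate, batch_size))
print(speedup(baseline_rate, optimized_rate))
```

Because all three runs use the same batch size, the ratio of step rates equals the ratio of sample rates, so either can be used to report the gain.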